AI

AI and machine learning on Linux: LLM deployment, GPU setup, self-hosted AI tools, and intelligent automation for sysadmins and DevOps engineers.

Ollama API Rate Limiting and Load Balancing on Linux

Protect and scale Ollama deployments on Linux with nginx rate limiting, upstream load balancing, health checks, and...

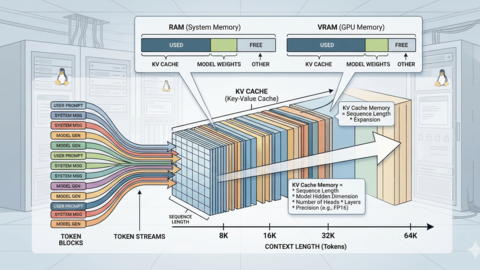

LLM Context Windows Explained: How Token Limits Affect Linux Server RAM

How LLM context windows impact Linux server RAM and VRAM. Covers token counting, KV cache memory calculations, Ollama...

LLM Benchmarking on Linux: How to Test and Compare Model Performance

Benchmark LLMs on Linux with repeatable methodology. Covers tokens/sec measurement, llama-bench, Ollama timing, VRAM...

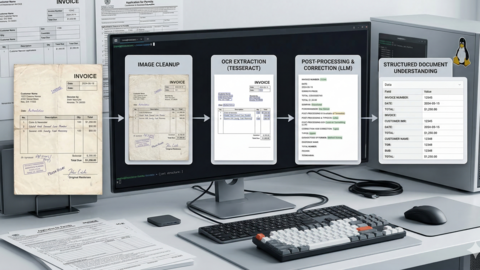

AI Document OCR on Linux: Open Source Pipeline with Tesseract and LLMs

Build an AI-enhanced OCR pipeline on Linux using Tesseract for text extraction and local LLMs for intelligent document...

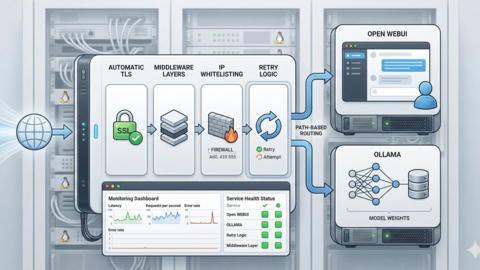

Traefik Reverse Proxy for Ollama and Open WebUI on Linux

Configure Traefik as a reverse proxy for Ollama and Open WebUI on Linux. Covers automatic TLS with Let's Encrypt,...

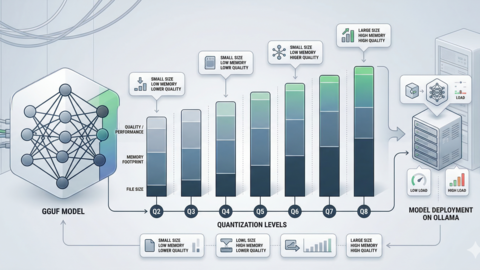

GGUF Model Format Explained: Quantization Guide for Ollama Users

Understanding GGUF and quantization for Ollama. Covers Q2 through F16 quantization levels, file sizes, memory...

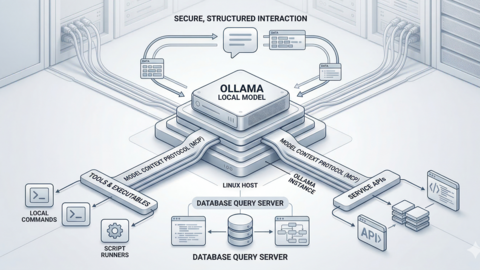

Model Context Protocol (MCP) on Linux with Ollama: Connect AI to Your Tools

Implement Model Context Protocol (MCP) on Linux to connect Ollama LLMs to external tools, databases, and APIs. Covers...

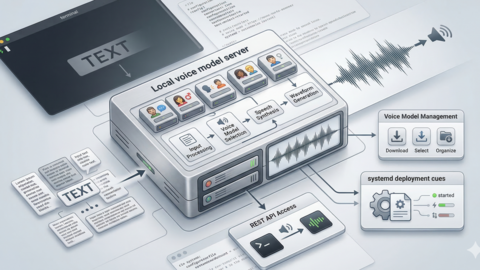

Piper TTS on Linux: Build a Self-Hosted Text-to-Speech Server

Deploy Piper text-to-speech on Linux with no cloud dependencies. Build a fast, private TTS server using systemd, a REST...

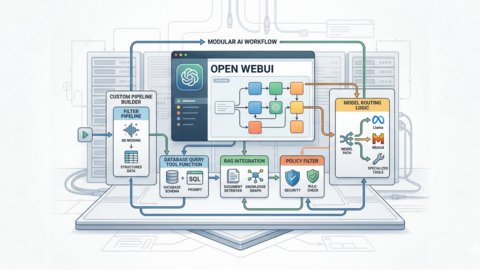

Open WebUI Custom Pipelines and Functions on Linux

Build custom pipelines and functions in Open WebUI on Linux. Create filter pipelines, RAG integrations, API-connected...

Flux Image Generation on Linux: Self-Hosted AI Art Server

Set up a self-hosted Flux image generation server on Linux. Covers ComfyUI and API-based workflows, GPU requirements,...

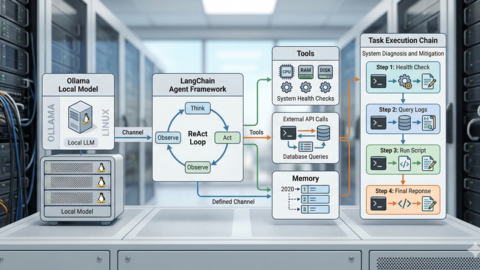

Ollama and LangChain on Linux: Build AI Agents with Local Models

Build autonomous AI agents on Linux using LangChain and Ollama. Covers tool-calling, ReAct patterns, memory chains, and...

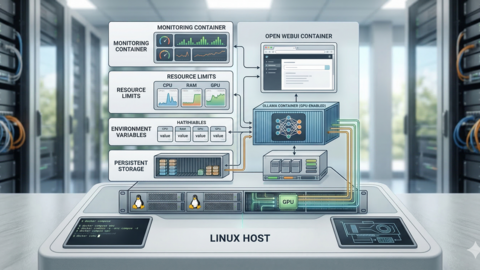

Ollama Docker Compose: Complete GPU Stack for Linux

Deploy Ollama with Docker Compose on Linux with full NVIDIA GPU passthrough. Covers multi-container stacks, persistent...