AI

AI and machine learning on Linux: deploying LLMs, setting up GPUs, running self-hosted AI tools, and building intelligent automation for sysadmins and DevOps engineers.

NVIDIA Container Toolkit on Linux: GPU Setup for Docker AI Workloads

Complete guide to installing the NVIDIA Container Toolkit on Ubuntu, Debian, Fedora, and RHEL. Configure Docker and...

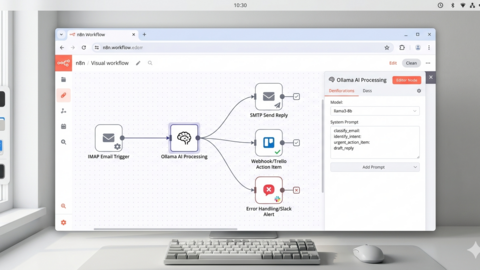

n8n and Ollama: Build Self-Hosted AI Automation Workflows on Linux

Connect n8n workflow automation to local Ollama for private AI-powered workflows on Linux. Build an email summarizer,...

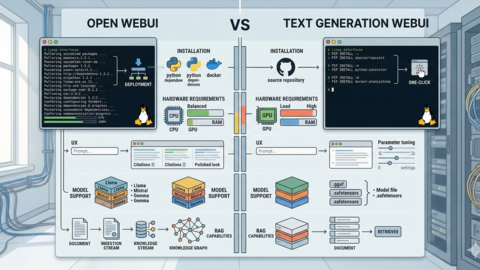

Open WebUI vs Text Generation WebUI: Side-by-Side Linux Server Comparison

A practical comparison of Open WebUI and Text Generation WebUI on Linux servers, covering installation, hardware needs,...

NVIDIA Tesla P40 and Ollama: Budget LLM Server Build Guide for Linux

Build a 24 GB VRAM LLM inference server around a used NVIDIA Tesla P40 for under $350. Complete parts list, driver...

AMD ROCm and Ollama on Linux: Complete GPU Setup Guide

Full guide to running Ollama on AMD GPUs with ROCm 6.x on Ubuntu and RHEL. Covers supported GPUs,...

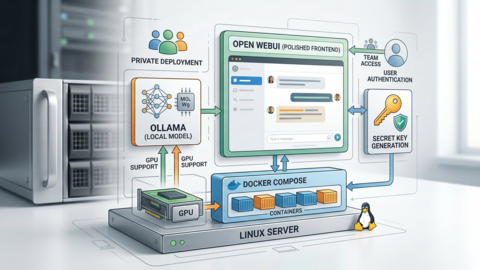

Deploy a Private ChatGPT on Your Linux Server with Ollama and Open WebUI

Step-by-step guide to deploying a private, self-hosted ChatGPT alternative on Ubuntu 24.04 with Ollama, Open WebUI,...

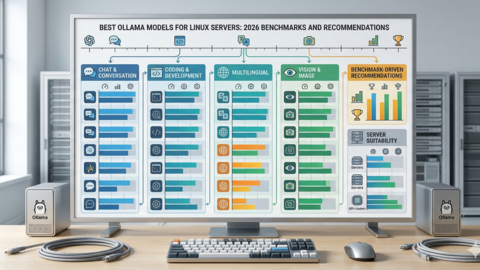

Best Ollama Models for Linux Servers: 2026 Benchmarks and Recommendations

Comprehensive benchmarks of the best Ollama models for Linux servers in 2026. Covers chat, coding, small, vision, and...

Ollama vs OpenAI API: True Cost Comparison and When Self-Hosting Wins

Real cost breakdown of self-hosted Ollama versus OpenAI API at 100, 1K, and 10K daily requests. Hardware costs,...

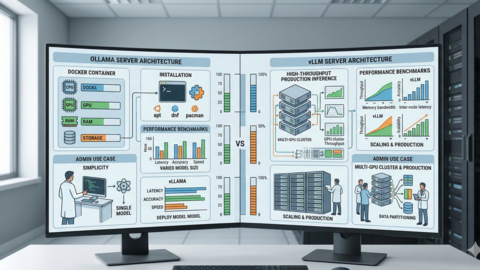

Ollama vs vLLM: Which LLM Server Should Linux Admins Choose?

A head-to-head comparison of Ollama and vLLM for Linux administrators: architecture, installation, benchmarks, GPU...

Ollama GPU Memory Not Enough: Complete Troubleshooting Guide for Linux

Fix Ollama GPU memory errors on Linux with VRAM diagnostics, quantization tuning, GPU/CPU offloading, multi-GPU...

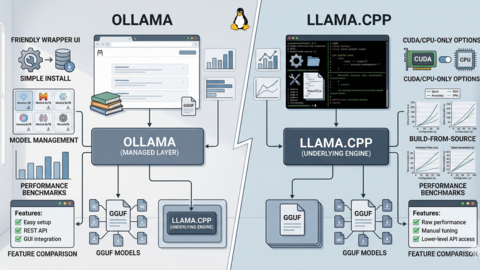

Ollama vs llama.cpp: Performance, Setup, and When to Use Each on Linux

Comparing Ollama and llama.cpp on Linux: architecture relationship, build-from-source guide with CUDA, performance...

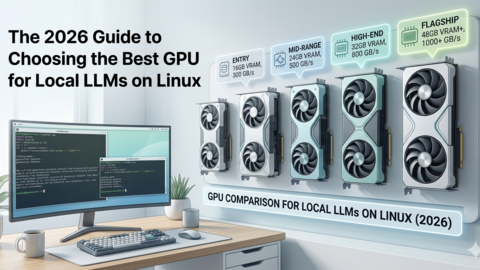

Best GPU for Running LLMs Locally on Linux: 2026 Buyer's Guide

Practical breakdown of the best GPUs for running LLMs locally in 2026. Covers VRAM tiers, Linux driver support, Ollama...