NVIDIA has dominated the local AI hardware space for years, but AMD's ROCm stack has matured to the point where running Ollama on AMD GPUs is genuinely practical on Linux. If you already own an AMD Radeon RX 7900 XTX or picked up a used Instinct MI250 from the datacenter surplus market, you can run local LLMs at competitive speeds without touching anything from NVIDIA. This guide covers the complete AMD ROCm and Ollama setup on Linux, from bare metal to generating tokens, including the workarounds needed for GPUs that AMD does not officially support.

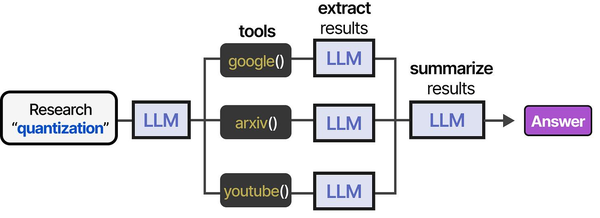

Ranjan et al. argue in Agentic AI in Enterprise that hardware diversity in AI infrastructure is becoming a strategic advantage. Organizations that can run inference on both AMD and NVIDIA GPUs gain negotiating leverage with vendors and resilience against supply-chain constraints. They recommend standardizing on the Ollama API layer precisely because it abstracts away GPU vendor differences, allowing the same application code to run on ROCm or CUDA without modification.

Brousseau and Sharp note in LLMs in Production that GPU selection for inference is primarily constrained by memory bandwidth and VRAM capacity rather than raw compute FLOPS. AMD's Instinct MI300X, with 192 GB of HBM3 memory and 5.3 TB/s of bandwidth, exceeds the NVIDIA H100 (80 GB, roughly 3.35 TB/s) on both fronts. For Linux administrators considering AMD hardware, the key differentiator is software ecosystem maturity: ROCm's compatibility with PyTorch and llama.cpp (Ollama's backend) is now production-ready, though some edge cases with specific model architectures may still require NVIDIA CUDA.

The ROCm ecosystem in 2026 is dramatically better than it was even eighteen months ago. ROCm 6.x brought stability improvements, broader GPU support, and, critically, first-class integration with llama.cpp, the inference engine that Ollama uses under the hood. That said, the AMD path still has more rough edges than the NVIDIA equivalent: driver installation is less forgiving, some GPUs need environment variable overrides, and the community is smaller. This guide walks through the most common pitfalls so you can avoid the hours of debugging that early adopters suffered through.

Supported AMD GPUs for Ollama and ROCm

Not every AMD GPU works with ROCm, and the line between "officially supported" and "works with a workaround" is important. AMD's official support list is conservative, but community testing has expanded the range of usable hardware considerably.

Officially Supported (ROCm 6.x)

| GPU | Architecture | VRAM | Memory Bandwidth | Target Market |
|---|---|---|---|---|
| Instinct MI300X | CDNA 3 | 192 GB HBM3 | 5,300 GB/s | Datacenter |
| Instinct MI300A | CDNA 3 | 128 GB HBM3 | 5,300 GB/s | Datacenter (APU) |
| Instinct MI250X | CDNA 2 | 128 GB HBM2e | 3,277 GB/s | Datacenter |
| Instinct MI250 | CDNA 2 | 128 GB HBM2e | 3,277 GB/s | Datacenter |
| Instinct MI210 | CDNA 2 | 64 GB HBM2e | 1,638 GB/s | Datacenter |
| Instinct MI100 | CDNA 1 | 32 GB HBM2 | 1,229 GB/s | Datacenter |
| Radeon PRO W7900 | RDNA 3 | 48 GB GDDR6 | 864 GB/s | Workstation |
| Radeon PRO W7800 | RDNA 3 | 32 GB GDDR6 | 576 GB/s | Workstation |
| Radeon RX 7900 XTX | RDNA 3 | 24 GB GDDR6 | 960 GB/s | Consumer |
| Radeon RX 7900 XT | RDNA 3 | 20 GB GDDR6 | 800 GB/s | Consumer |

Community-Tested (Works with HSA_OVERRIDE_GFX_VERSION)

| GPU | Architecture | VRAM | Override Value | Status |
|---|---|---|---|---|
| Radeon RX 7800 XT | RDNA 3 | 16 GB | 11.0.0 | Works well |
| Radeon RX 7700 XT | RDNA 3 | 12 GB | 11.0.0 | Works well |
| Radeon RX 7600 | RDNA 3 | 8 GB | 11.0.0 | Works, limited VRAM |
| Radeon RX 6900 XT | RDNA 2 | 16 GB | 10.3.0 | Works well |
| Radeon RX 6800 XT | RDNA 2 | 16 GB | 10.3.0 | Works well |
| Radeon RX 6800 | RDNA 2 | 16 GB | 10.3.0 | Works well |
| Radeon RX 6700 XT | RDNA 2 | 12 GB | 10.3.0 | Works, some issues |

The HSA_OVERRIDE_GFX_VERSION environment variable tells the ROCm runtime to treat your GPU as a different (supported) model. This works because GPUs within the same architecture family use largely identical instruction sets. An RX 7800 XT is architecturally similar enough to the RX 7900 XTX that forcing gfx1100 compatibility works without issues for inference workloads.

GPUs older than RDNA 2 (RX 5000 series and earlier) are not supported by ROCm 6.x and should not be expected to work with Ollama's GPU acceleration under this setup. The Vega series (VII, 56, 64) was supported by older ROCm versions, but that support was dropped in ROCm 6.x.

Installing ROCm 6.x on Ubuntu 22.04 / 24.04

Ubuntu is the best-supported Linux distribution for ROCm. AMD's official packages are built and tested against Ubuntu first, and most community guides assume Ubuntu. The installation process has become significantly more reliable with ROCm 6.x compared to earlier versions.

Prerequisites

# Update system and install dependencies

sudo apt update && sudo apt upgrade -y

sudo apt install -y wget gpg linux-headers-$(uname -r) linux-modules-extra-$(uname -r)

# Verify your kernel version is supported (5.15+ for Ubuntu 22.04, 6.5+ for 24.04)

uname -r

# Add your user to the video and render groups

sudo usermod -aG video $USER

sudo usermod -aG render $USER

# Log out and back in for group changes to take effect, or run:

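# newgrp starts a new shell with one group applied, so it cannot be chained with &&; run it once per group as needed

newgrp render

Install the AMDGPU Driver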

ROCm requires AMD's AMDGPU kernel driver, which is separate from the open-source amdgpu driver that ships with the Linux kernel. The AMD-provided version includes firmware and features needed for compute workloads.
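Because the DKMS module is built against the running kernel, it can be worth confirming the kernel version before installing the driver. The following is a minimal sketch using the minimums mentioned in the prerequisites (5.15 for Ubuntu 22.04, 6.5 for 24.04). The function name kernel_at_least is illustrative, and the comparison relies on GNU sort -V.

```shell
#!/usr/bin/env bash
# Succeed if kernel version $1 is at least the minimum version $2.
# Relies on GNU sort -V (version sort) from coreutils.
kernel_at_least() {
    local current="${1%%-*}"   # drop everything from the first hyphen: 6.8.0-45-generic -> 6.8.0
    local minimum="$2"
    # If the minimum sorts first (or both are equal), the kernel is new enough.
    [ "$(printf '%s\n%s\n' "$minimum" "$current" | sort -V | head -n 1)" = "$minimum" ]
}

# Example with a literal version string; on a real host pass "$(uname -r)".
if kernel_at_least "6.8.0-45-generic" "6.5"; then
    echo "kernel OK"
else
    echo "kernel too old"
fi
```

On a real machine the call would look like kernel_at_least "$(uname -r)" 5.15 for Ubuntu 22.04.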

# Download and install the AMD GPU repository package

wget https://repo.radeon.com/amdgpu-install/6.3.1/ubuntu/noble/amdgpu-install_6.3.60301-1_all.deb

# For Ubuntu 22.04, use the jammy URL instead:

# wget https://repo.radeon.com/amdgpu-install/6.3.1/ubuntu/jammy/amdgpu-install_6.3.60301-1_all.deb

sudo dpkg -i amdgpu-install_6.3.60301-1_all.deb

sudo apt update

# Install AMDGPU driver with ROCm compute support

sudo amdgpu-install -y --usecase=rocm

# This installs:

# - amdgpu DKMS kernel module

# - ROCm runtime libraries

# - rocm-smi (GPU monitoring tool)

# - HIP runtime

Verify the Installation

# Check that the amdgpu kernel module is loaded

lsmod | grep amdgpu

# Check that ROCm detects your GPU

rocm-smi

# You should see output like:

# ============================= ROCm System Management Interface =============================

# ============================================================================================

# GPU Temp AvgPwr SCLK MCLK Fan Perf PwrCap VRAM% GPU%

# 0 38c 15W 500MHz 1000MHz 0% auto 303W 0% 0%

# ============================================================================================

# Verify the ROCm runtime

rocminfo | head -50

# Look for your GPU in the "Agent" entries. You should see something like:

# Name: gfx1100 (for RX 7900 XTX)

# Name: gfx90a (for MI250)

# Check the ROCm version

cat /opt/rocm/.info/version

If rocm-smi shows your GPU with temperature and power readings, the driver is working correctly. If the command is not found or returns no devices, the installation failed; check dmesg for driver errors.
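To pull just the GFX targets out of the rocminfo output, a small filter can help. This is a sketch that assumes the targets appear as gfx followed by hexadecimal digits, as in the Name lines shown above; the function name list_gfx_targets is made up for illustration.

```shell
#!/usr/bin/env bash
# Read rocminfo-style text on stdin and print each distinct gfx target once.
list_gfx_targets() {
    grep -oE 'gfx[0-9a-f]+' | sort -u
}

# Demo on a hand-written sample shaped like the "Name:" lines above.
# On a real host: rocminfo | list_gfx_targets
printf '  Name: gfx1100\n  Name: AMD Ryzen 5 5600\n  Name: gfx1100\n' | list_gfx_targets
```

The value it prints is what you compare against the support tables earlier in this guide.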

Installing ROCm 6.x on RHEL 9 / Rocky Linux 9 / AlmaLinux 9

ROCm support on RHEL-family distributions has improved but still requires more manual steps than Ubuntu. AMD officially supports RHEL 9.x starting with ROCm 6.0.

# Install prerequisites

sudo dnf install -y kernel-devel-$(uname -r) kernel-headers-$(uname -r) dkms wget

# Add AMD GPU repository

sudo tee /etc/yum.repos.d/amdgpu.repo << 'EOF'

[amdgpu]

name=amdgpu

baseurl=https://repo.radeon.com/amdgpu/6.3.1/el/9.4/main/x86_64/

enabled=1

gpgcheck=1

gpgkey=https://repo.radeon.com/rocm/rocm.gpg.key

EOF

sudo tee /etc/yum.repos.d/rocm.repo << 'EOF'

[rocm]

name=rocm

baseurl=https://repo.radeon.com/rocm/el9/6.3.1/main

enabled=1

gpgcheck=1

gpgkey=https://repo.radeon.com/rocm/rocm.gpg.key

EOF

# Install ROCm

sudo dnf install -y amdgpu-dkms rocm

# Add user to required groups

sudo usermod -aG video $USER

sudo usermod -aG render $USER

# Reboot to load the new kernel module

sudo reboot

After reboot, verify with rocm-smi and rocminfo as shown in the Ubuntu section. The output should be identical regardless of distribution.
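If you manage several hosts or expect to move to another ROCm release, generating the repository stanza from a version number avoids hand-editing URLs. The sketch below assumes that other releases follow the same URL layout as the 6.3.1 example above, which you should confirm on repo.radeon.com; render_rocm_repo is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Print a rocm.repo stanza for the given ROCm version.
# Assumption: other releases use the same URL layout as the 6.3.1 example.
render_rocm_repo() {
    local version="$1"
    cat <<EOF
[rocm]
name=rocm
baseurl=https://repo.radeon.com/rocm/el9/${version}/main
enabled=1
gpgcheck=1
gpgkey=https://repo.radeon.com/rocm/rocm.gpg.key
EOF
}

# Preview the stanza; to install it, pipe the output into
# sudo tee /etc/yum.repos.d/rocm.repo
render_rocm_repo "6.3.1"
```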

SELinux Considerations on RHEL

SELinux in enforcing mode can block ROCm from accessing GPU devices. If rocm-smi works as root but not as a regular user, SELinux is likely the culprit.

# Check for SELinux denials related to GPU access

sudo ausearch -m AVC -ts recent | grep -iE 'kfd|dri|render'

# If you see denials, create a custom policy module

sudo ausearch -m AVC -ts recent | audit2allow -M rocm-gpu

sudo semodule -i rocm-gpu.pp

# Alternatively, set the correct SELinux context for GPU device nodes

sudo chcon -t xserver_misc_device_t /dev/kfd

sudo chcon -t xserver_misc_device_t /dev/dri/render*

Setting Up HSA_OVERRIDE_GFX_VERSION for Unsupported GPUs

If your GPU is not in AMD's official support list but is RDNA 2 or RDNA 3 architecture, you can likely make it work with the HSA_OVERRIDE_GFX_VERSION environment variable. This variable makes the ROCm runtime report your GPU as a different (supported) GFX target, so the precompiled GPU kernels built for that target are loaded instead of being rejected.

Determining the Correct Override Value

The override value corresponds to the GFX version of a supported GPU in the same architecture family:

- RDNA 3 GPUs (RX 7600, 7700 XT, 7800 XT, etc.): Use HSA_OVERRIDE_GFX_VERSION=11.0.0 (targets gfx1100, which is the RX 7900 XTX)

- RDNA 2 GPUs (RX 6700 XT, 6800, 6800 XT, 6900 XT): Use HSA_OVERRIDE_GFX_VERSION=10.3.0 (targets gfx1030, which is the RX 6900 XT)
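The mapping above can be written as a small lookup. This is a sketch that covers only the RDNA 2 and RDNA 3 targets named in this guide; the function name suggest_gfx_override is illustrative, and any other target is reported as unknown rather than guessed.

```shell
#!/usr/bin/env bash
# Map a gfx target reported by rocminfo to the override value used in this guide.
# For gfx1100 and gfx1030 the value equals the native version, so it changes nothing.
suggest_gfx_override() {
    case "$1" in
        gfx1100|gfx1101|gfx1102) echo "11.0.0" ;;   # RDNA 3
        gfx1030|gfx1031|gfx1032) echo "10.3.0" ;;   # RDNA 2
        *) echo "unknown"; return 1 ;;              # not covered by this guide
    esac
}

# Example: an RDNA 3 card that reports gfx1101.
suggest_gfx_override gfx1101
```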

Setting the Variable System-Wide

# Option 1: Set in environment file (affects all users)

echo 'HSA_OVERRIDE_GFX_VERSION=11.0.0' | sudo tee -a /etc/environment

# Option 2: Set in your shell profile (affects current user only)

echo 'export HSA_OVERRIDE_GFX_VERSION=11.0.0' >> ~/.bashrc

source ~/.bashrc

# Option 3: Set specifically for the Ollama service (recommended)

sudo mkdir -p /etc/systemd/system/ollama.service.d

sudo tee /etc/systemd/system/ollama.service.d/override.conf << 'EOF'

[Service]

Environment="HSA_OVERRIDE_GFX_VERSION=11.0.0"

EOF

sudo systemctl daemon-reload

sudo systemctl restart ollama

Option 3 is the cleanest approach because it only affects Ollama and does not interfere with other applications that might use the GPU. Use Option 1 or 2 if you run other ROCm applications that also need the override.
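If you provision more than one machine, the drop-in from Option 3 can be generated from the override value instead of being typed by hand. A minimal sketch follows; render_ollama_dropin is a hypothetical helper, and it only prints the file contents so you can review them before writing anything.

```shell
#!/usr/bin/env bash
# Print a systemd drop-in that sets the GFX override for the Ollama service.
render_ollama_dropin() {
    local gfx_version="$1"
    printf '[Service]\nEnvironment="HSA_OVERRIDE_GFX_VERSION=%s"\n' "$gfx_version"
}

# Preview for an RDNA 2 card. To apply it, pipe the output into
# sudo tee /etc/systemd/system/ollama.service.d/override.conf
# and then run daemon-reload and restart the service.
render_ollama_dropin "10.3.0"
```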

Verifying the Override Works

# Run with the variable set and check for GPU detection

HSA_OVERRIDE_GFX_VERSION=11.0.0 rocminfo 2>&1 | grep "Name:"

# You should see your GPU listed with the overridden GFX version

# If you see "failed" or "not supported" errors, the override is not appropriate for your GPU

Installing and Configuring Ollama with ROCm

Ollama's official Linux install script handles AMD support for you: when it detects an AMD GPU, it also installs the ROCm-enabled libraries that Ollama distributes. You do not need to compile anything from source. At startup, Ollama detects whether your system has NVIDIA (CUDA) or AMD (ROCm) drivers and uses the appropriate backend.

Installing Ollama

# Install Ollama using the official install script

curl -fsSL https://ollama.com/install.sh | sh

# The script:

# 1. Downloads the latest Ollama binary

# 2. Creates a systemd service

# 3. Creates an 'ollama' system user

# 4. Starts the service

# Verify the installation

ollama --version

# Smoke test: load a small model (the reply itself cannot tell you which GPU is in use)

ollama run llama3.2:1b "Hello, what GPU are you running on?"

# While the model is loaded, rocm-smi should show VRAM in use, and ollama ps should report GPU in its PROCESSOR column

Verifying GPU Acceleration

# In a separate terminal, monitor GPU usage while Ollama runs a model

watch -n 1 rocm-smi

# You should see VRAM% increase when a model loads and GPU% increase during inference

# If VRAM stays at 0%, Ollama is running on CPU — check the logs

# Check Ollama logs for GPU detection messages

journalctl -u ollama -n 50 --no-pager | grep -i "gpu\|rocm\|amd\|hip"

# Successful detection looks like:

# msg="inference compute" id=0 library=rocm compute=gfx1100 driver=6.3 name="Radeon RX 7900 XTX" ...

Configuring the Ollama Service for ROCm

If Ollama does not detect your AMD GPU, you may need to adjust the systemd service configuration.

# Edit the Ollama service override

sudo systemctl edit ollama

# Add the following content (adjust for your GPU):

[Service]

# For unsupported GPUs, set the GFX override

Environment="HSA_OVERRIDE_GFX_VERSION=11.0.0"

# Ensure the ollama user can access GPU devices

SupplementaryGroups=video render

# Increase the file descriptor limit for large models

LimitNOFILE=65536

# Save and exit, then reload and restart

sudo systemctl daemon-reload

sudo systemctl restart ollama

# Verify it is running

systemctl status ollama

Performance: AMD ROCm vs NVIDIA CUDA on Ollama

Let us put real numbers on the AMD vs NVIDIA comparison. These benchmarks use Ollama's built-in generation speed reporting on Llama 3.1 8B Q4_K_M with a standard prompt (800 input tokens, 400 output tokens).
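To reproduce numbers like these on your own hardware, ollama run with the --verbose flag prints evaluation rates after each response, and the /api/generate response includes the fields eval_count and eval_duration (in nanoseconds), plus prompt_eval_count and prompt_eval_duration for the prompt phase. The sketch below shows only the arithmetic; tokens_per_second is an illustrative helper name and the sample values are made up.

```shell
#!/usr/bin/env bash
# Convert a token count and a duration in nanoseconds into tokens per second.
tokens_per_second() {
    local count="$1" duration_ns="$2"
    awk -v c="$count" -v ns="$duration_ns" 'BEGIN { printf "%.2f\n", c / (ns / 1e9) }'
}

# Made-up example: 400 tokens generated in 8 seconds.
tokens_per_second 400 8000000000
```

Feed it eval_count and eval_duration for generation speed, or the prompt_eval pair for prompt evaluation speed.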

Token Generation Speed (tokens/second)

| GPU | Framework | VRAM | Bandwidth | Prompt Eval | Token Gen | Approx. Price (2026) |
|---|---|---|---|---|---|---|
| RX 7900 XTX | ROCm 6.3 | 24 GB | 960 GB/s | ~2,800 t/s | ~52 t/s | $650 new / $450 used |
| RTX 4090 | CUDA 12.x | 24 GB | 1,008 GB/s | ~4,200 t/s | ~65 t/s | $1,200 used |
| RTX 3090 | CUDA 12.x | 24 GB | 936 GB/s | ~3,500 t/s | ~58 t/s | $420 used |
| RX 7800 XT | ROCm 6.3 | 16 GB | 624 GB/s | ~1,800 t/s | ~34 t/s | $350 new / $250 used |
| RX 6800 XT | ROCm 6.3 | 16 GB | 512 GB/s | ~1,400 t/s | ~28 t/s | $200 used |
| MI250 (1 GCD) | ROCm 6.3 | 64 GB | 1,638 GB/s | ~5,100 t/s | ~85 t/s | $500 used |
| MI300X | ROCm 6.3 | 192 GB | 5,300 GB/s | ~12,000 t/s | ~220 t/s | $8,000+ new |
| A100 40 GB | CUDA 12.x | 40 GB | 1,555 GB/s | ~5,500 t/s | ~88 t/s | $600 used |

Key observations from these numbers:

- The RX 7900 XTX performs at roughly 80% of the RTX 4090's speed while costing well under half as much ($450 vs $1,200, used vs used). On a performance-per-dollar basis, AMD consumer cards are competitive.

- Prompt evaluation speed is consistently lower on ROCm compared to CUDA for the same memory bandwidth class. This is a software optimization gap — ROCm's batch processing of prompt tokens is less efficient than CUDA's.

- Token generation speed correlates closely with memory bandwidth on both platforms. The MI250 and A100 are close because their bandwidths are close, despite being completely different architectures.

- The MI300X is in a class by itself. Its 192 GB of HBM3 and 5.3 TB/s of bandwidth make it the fastest single GPU in this comparison, and it can hold Llama 3.1 70B at 16-bit precision (about 140 GB of weights) without quantization.
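One way to see why generation speed tracks memory bandwidth is a rough ceiling: if producing each token requires reading every weight once, tokens per second cannot exceed bandwidth divided by model size. This is a simplification that ignores the KV cache, compute time, and kernel overhead, so treat the sketch below as an upper bound rather than a prediction. The function name is illustrative, and the 4.9 GB figure is the approximate size of the 8B Q4_K_M model.

```shell
#!/usr/bin/env bash
# Rough ceiling on tokens/second if every token requires reading all weights once.
bandwidth_bound_tps() {
    local bandwidth_gbs="$1" model_gb="$2"
    awk -v bw="$bandwidth_gbs" -v size="$model_gb" 'BEGIN { printf "%.1f\n", bw / size }'
}

# RX 7900 XTX (960 GB/s) with an 8B Q4_K_M model of about 4.9 GB.
bandwidth_bound_tps 960 4.9
```

The measured ~52 t/s in the table is roughly a quarter of that ceiling, which leaves room for software improvements on the same hardware.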

Where AMD Falls Behind

Raw token generation is only part of the story. There are meaningful gaps between the AMD and NVIDIA experience on Linux:

- Software maturity: CUDA has 15+ years of optimization. ROCm is catching up but still has rough edges. Occasional segfaults during model loading, slower context switching, and less efficient memory management are common reports.

- Flash Attention: Flash Attention 2 support on ROCm exists but is less optimized than the CUDA version. This affects prompt processing speed more than token generation speed.

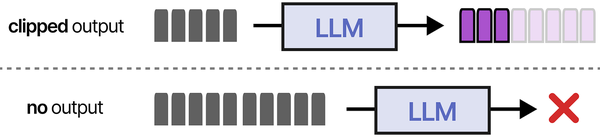

- Quantization support: Some quantization formats (specifically GGUF IQ-series quantizations) are less optimized on ROCm. Q4_K_M and Q5_K_M work well; exotic quantizations may be slower.

- Multi-GPU: Running multiple AMD GPUs for model sharding works but is less reliable than NVIDIA multi-GPU with NVLink. Peer-to-peer GPU communication over PCIe is slower and more finicky on ROCm.

- Community and troubleshooting: When something goes wrong, there are far more Stack Overflow answers, GitHub issues, and forum posts about CUDA problems than about ROCm problems. Debugging AMD-specific issues requires more self-reliance.

Troubleshooting Common ROCm Issues with Ollama

Problem: Ollama does not detect the AMD GPU

# Check 1: Is the amdgpu kernel module loaded?

lsmod | grep amdgpu

# If not loaded, try:

sudo modprobe amdgpu

# Check 2: Can the user access GPU devices?

ls -la /dev/kfd /dev/dri/render*

# Output should show group 'render' or 'video' with rw permissions

# If not, add your user (or the ollama user) to the correct groups:

sudo usermod -aG render ollama

sudo usermod -aG video ollama

# Check 3: Is ROCm installed correctly?

rocminfo 2>&1 | grep "Agent"

# Should list at least one GPU agent

# Check 4: Are the ROCm libraries in the path?

echo $LD_LIBRARY_PATH

# Should include /opt/rocm/lib

# If not:

echo 'export LD_LIBRARY_PATH=/opt/rocm/lib:$LD_LIBRARY_PATH' >> ~/.bashrc

# Check 5: For the Ollama systemd service specifically

sudo -u ollama bash -c 'rocminfo 2>&1 | head -5'

# If this fails but your user can run rocminfo, it is a permissions issue

Problem: "GPU agent not supported" or HIP errors

# This usually means your GPU is not in the official support list

# Identify your GPU's GFX version

rocminfo 2>&1 | grep "Name:.*gfx"

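# Set the override for your architecture (pick one). The variable must reach the Ollama server process;

# setting it only for the ollama run client has no effect on a server started by systemd.

# RDNA 3 (gfx1100, gfx1101, gfx1102):

export HSA_OVERRIDE_GFX_VERSION=11.0.0

# RDNA 2 (gfx1030, gfx1031, gfx1032):

export HSA_OVERRIDE_GFX_VERSION=10.3.0

# Test again: stop the service and start a foreground server with the override

sudo systemctl stop ollama

HSA_OVERRIDE_GFX_VERSION=11.0.0 ollama serve

# Then, in a second terminal:

ollama run llama3.2:1b "test"

Problem: Model loads but inference is extremely slow (CPU speed)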

# Ollama may be silently falling back to CPU. Check VRAM usage:

rocm-smi --showmeminfo vram

# If VRAM usage is 0 during inference, GPU offloading is not working

# Check Ollama logs for the reason:

journalctl -u ollama --since "5 minutes ago" | grep -i "gpu\|offload\|layers"

# Common cause: insufficient VRAM. Ollama loads as many layers as fit in VRAM

# and runs the rest on CPU. If your GPU has 8 GB VRAM and you're loading a model

# that needs 10 GB, most layers run on CPU.

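# Force full GPU offloading (will fail if model doesn't fit).

# ollama run has no --num-gpu flag; set the num_gpu parameter inside the interactive session instead:

ollama run llama3.1:8b

# >>> /set parameter num_gpu 999

Problem: Segmentation fault during model loading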

# ROCm segfaults during model loading are often caused by:

# 1. Incompatible ROCm version with kernel version

# 2. Corrupted model files

# 3. Insufficient system RAM (ROCm needs system RAM during initialization)

# Fix 1: Verify kernel and ROCm compatibility

uname -r

cat /opt/rocm/.info/version

# Check AMD's compatibility matrix for your specific combination

# Fix 2: Re-download the model

ollama rm llama3.1:8b

ollama pull llama3.1:8b

# Fix 3: Ensure sufficient system RAM (at least 2x the model size recommended)

free -h

# Fix 4: Update ROCm to the latest point release

sudo amdgpu-install -y --usecase=rocm --accept-eula

Problem: Poor performance after kernel update

# DKMS module may not have rebuilt after a kernel update

sudo dkms status

# Look for amdgpu entries — they should show "installed" for your current kernel

# If the module is missing for your kernel:

sudo dkms autoinstall

sudo reboot

# If DKMS fails, reinstall the amdgpu driver:

sudo amdgpu-install -y --usecase=rocm

Optimizing ROCm Performance for Ollama

Once the basic setup works, there are several tweaks that can improve inference speed on AMD GPUs.

Environment Variables for Performance

# Add these to /etc/environment, or to the Ollama systemd service override as Environment="NAME=value" lines

# Keep comments on their own lines; systemd unit files do not allow trailing comments after a value

# Forces 64-bit pointers in the GPU runtime (it does not control clock gating)

GPU_FORCE_64BIT_PTR=1

# Place kernel arguments in device memory, which can reduce kernel launch latency

HIP_FORCE_DEV_KERNARG=1

# ROC_ENABLE_PRE_VEGA toggles support for pre-Vega GPUs and does not limit compute units;

# leave it commented out on RDNA and CDNA cards

# ROC_ENABLE_PRE_VEGA=0

# For multi-GPU systems, select specific GPUs for Ollama (0 = first GPU only; 0,1 = first two GPUs)

HIP_VISIBLE_DEVICES=0

# Raise the runtime's memory allocation limits (both values are percentages)

GPU_MAX_ALLOC_PERCENT=100

GPU_MAX_HEAP_SIZE=100

Power and Clock Management

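# Force the GPU to its highest performance level (valid values include auto, low, high, and manual; "compute" is not accepted by this file)

# card0 may be a different device on systems with an iGPU or several GPUs; check /sys/class/drm/ first

sudo sh -c 'echo high > /sys/class/drm/card0/device/power_dpm_force_performance_level'

# Or use rocm-smi to set performance level

sudo rocm-smi --setperflevel high

# Monitor GPU clocks to ensure they are boosting during inference

watch -n 1 rocm-smi --showclocks

Memory Configuration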

# Raise the maximum number of memory map areas a process may have

# Useful for large models and long context windows

echo 'vm.max_map_count = 1048576' | sudo tee -a /etc/sysctl.d/99-rocm.conf

sudo sysctl -p /etc/sysctl.d/99-rocm.conf

# Disable transparent huge pages if you experience memory fragmentation

echo never | sudo tee /sys/kernel/mm/transparent_hugepage/enabled

Running Specific Models on AMD GPUs

Not all models perform equally well on AMD hardware. Based on extensive community testing, here are recommendations for different AMD GPU tiers.
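Before pulling a model, a quick sanity check is whether its weights plus some working memory fit in VRAM. The sketch below assumes a fixed reserve for the KV cache and runtime buffers; the 1.5 GB default is an illustrative assumption, not a measured value, and longer contexts need more. The function name fits_in_vram is made up for this example.

```shell
#!/usr/bin/env bash
# Succeed if a model of $1 GB fits in $2 GB of VRAM with $3 GB kept free
# for the KV cache and runtime buffers (the 1.5 GB default is an assumption).
fits_in_vram() {
    local model_gb="$1" vram_gb="$2" reserve_gb="${3:-1.5}"
    awk -v m="$model_gb" -v v="$vram_gb" -v r="$reserve_gb" 'BEGIN { exit !(m + r <= v) }'
}

fits_in_vram 4.9 8 && echo "a 4.9 GB model should fit on an 8 GB card"
fits_in_vram 26 24 || echo "a 26 GB model will not fit entirely on a 24 GB card"
```

When the check fails, Ollama still runs the model but places the remaining layers on the CPU, which is much slower.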

8 GB VRAM (RX 7600, RX 6600 XT)

# These GPUs can run 7B-8B models at Q4 quantization

ollama run llama3.1:8b # Default Q4_K_M, ~4.9 GB VRAM

ollama run mistral:7b # ~4.4 GB VRAM

ollama run gemma2:2b # Smaller model, fast responses

ollama run phi3:mini # Microsoft's compact model

16 GB VRAM (RX 7800 XT, RX 6800 XT, RX 6800)

# 13B-14B models become available

ollama run llama3.1:8b # Runs comfortably with long context

ollama run deepseek-r1:14b # Great reasoning model

ollama run qwen2.5:14b # Strong multilingual model

ollama run codellama:13b # For code generation tasks

24 GB VRAM (RX 7900 XTX)

# Similar to an RTX 3090 in model capacity

ollama run qwen2.5:32b # Default tag is Q4_K_M, fits in 24 GB

ollama run llama3.1:8b # Leaves room for a long context (for example 32K)

ollama run mixtral:8x7b # Mixture of experts, ~26 GB at Q4, so some layers spill to the CPU

ollama run deepseek-r1:32b # Q4, excellent reasoning

48+ GB VRAM (PRO W7900, MI210, MI250)

# Large models become practical

ollama run llama3.1:70b # Default tag is Q4_K_M; the gold standard, needs ~42 GB

ollama run qwen2.5:72b # Default tag is Q4_K_M; comparable to GPT-4 on many tasks

ollama run mixtral:8x7b # Runs comfortably at Q5 or Q8

Building a Cost-Effective AMD Inference Server

If you are building a new system specifically for local LLM inference with AMD, here is a practical parts list optimized for the RX 7900 XTX — the best consumer-tier AMD GPU for this purpose.

| Component | Recommendation | Price (USD) |
|---|---|---|
| GPU | AMD Radeon RX 7900 XTX (used or refurbished) | $450 |
| CPU | AMD Ryzen 5 5600 or Intel i5-12400 | $100 |
| Motherboard | B550 (AMD) or B660 (Intel) with PCIe 4.0 x16 | $80 |
| RAM | 32 GB DDR4-3200 (2× 16 GB) | $50 |
| Storage | 500 GB NVMe SSD | $35 |
| PSU | 750W 80+ Gold (Corsair RM750 or similar) | $80 |
| Case | Mid-tower with good airflow | $60 |
| Total | | $855 |

This build gives you 24 GB VRAM, ~52 tok/s on 8B models, and enough headroom for 32B quantized models. Monthly electricity at typical usage: ~$15–20/month. Compare this to an equivalent NVIDIA build: an RTX 3090 with similar ancillary components costs roughly $750–850 total and delivers about 10% faster inference. The RX 7900 XTX build is slightly more expensive, but it uses newer hardware with better prospects for future driver support.
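The electricity estimate depends heavily on your own usage and tariff, so it is worth recomputing. The sketch below uses assumed inputs (350 W average draw under load, 8 hours a day, $0.20 per kWh) that are illustrative only and not measurements from this build; monthly_cost_usd is a made-up helper name.

```shell
#!/usr/bin/env bash
# Estimate monthly electricity cost in USD for a 30-day month.
monthly_cost_usd() {
    local watts="$1" hours_per_day="$2" usd_per_kwh="$3"
    awk -v w="$watts" -v h="$hours_per_day" -v p="$usd_per_kwh" \
        'BEGIN { printf "%.2f\n", w * h * 30 / 1000 * p }'
}

# Assumed inputs: 350 W average draw, 8 hours a day, $0.20 per kWh.
monthly_cost_usd 350 8 0.20
```

With those assumptions the result lands inside the $15–20 range quoted above; a machine that idles most of the day will cost less.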

Further Reading

- Grootendorst, M. & Alammar, J. (2025). An Illustrated Guide to AI Agents. O'Reilly Media. Covers agent memory systems, RAG pipelines, tool usage, MCP protocol, and context engineering with clear visual explanations.

- Ranjan, R. et al. (2025). Agentic AI in Enterprise. Apress. Explores enterprise AI architecture, RAG vs fine-tuning trade-offs, vector databases, prompt engineering, GPU acceleration, and Kubernetes orchestration for production AI.

- Brousseau, D. & Sharp, T. (2025). LLMs in Production. Manning Publications. Covers neural network fundamentals, transformer architecture, GPU management, latency optimization, Kubernetes deployment, MLOps pipelines, embeddings, and model quantization.

Frequently Asked Questions

Does Ollama automatically use my AMD GPU, or do I need to configure it?

If ROCm is properly installed and your GPU is detected by rocminfo, Ollama automatically uses it. No additional configuration is needed for officially supported GPUs. Ollama's startup logs (visible via journalctl -u ollama) will show a line like "inference compute library=rocm compute=gfx1100" confirming GPU detection. For unsupported GPUs that require HSA_OVERRIDE_GFX_VERSION, you need to set that environment variable either system-wide or in the Ollama systemd service override before Ollama will recognize the GPU.
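To check the detection line without reading the whole log, you can filter for it and extract the two fields that matter. This sketch assumes the log line has the shape quoted in this guide (library=... followed later by compute=...); parse_inference_compute is an illustrative name, and the exact log format may differ between Ollama versions.

```shell
#!/usr/bin/env bash
# Read Ollama log lines on stdin and print "library compute" for each
# "inference compute" entry, for example "rocm gfx1100".
parse_inference_compute() {
    grep 'inference compute' \
        | sed -nE 's/.*library=([^ ]+).*compute=([^ ]+).*/\1 \2/p'
}

# Demo on a sample line in the format quoted in this guide.
# On a real host: journalctl -u ollama --no-pager | parse_inference_compute
echo 'msg="inference compute" id=0 library=rocm compute=gfx1100 driver=6.3 name="Radeon RX 7900 XTX"' \
    | parse_inference_compute
```

No output means no detection line was found, which usually points to a CPU fallback.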

Can I use an AMD GPU alongside an NVIDIA GPU in the same system for Ollama?

Technically you can have both installed, but Ollama uses only one backend (either CUDA or ROCm) per instance. It prefers CUDA if both are available. To force Ollama to use the AMD GPU, you can set HIP_VISIBLE_DEVICES=0 and unset CUDA_VISIBLE_DEVICES, or run separate Ollama instances on different ports, each configured for a different GPU. This setup is unusual and not well-tested. For simplicity, use one GPU vendor per system.

Is the RX 7900 XTX worth buying over a used RTX 3090 for Ollama specifically?

For pure Ollama inference in early 2026, the used RTX 3090 at $400–450 offers better value. It is roughly 10% faster in token generation, has better software support, and costs less. The RX 7900 XTX makes sense if you also game on the same machine (it is faster in rasterization), if you prefer AMD's open-source driver philosophy, or if you want newer hardware with longer remaining driver support. Both have 24 GB VRAM, so model compatibility is identical. If AMD GPU pricing drops below $350 used, the value proposition shifts toward AMD.
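The value comparison can be made concrete by dividing generation speed by price. The sketch below uses the token rates and used prices from the benchmark table in this guide; tps_per_100_usd is an illustrative helper name, and the result shifts with whatever prices you actually find.

```shell
#!/usr/bin/env bash
# Tokens/second obtained per 100 USD of GPU price.
tps_per_100_usd() {
    local tps="$1" price_usd="$2"
    awk -v t="$tps" -v p="$price_usd" 'BEGIN { printf "%.1f\n", t / p * 100 }'
}

tps_per_100_usd 52 450    # RX 7900 XTX, used
tps_per_100_usd 58 420    # RTX 3090, used
```

Rerunning the first call with a price of 350 shows how a price drop changes the picture.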

Why is my AMD GPU slower than benchmarks suggest on Ollama?

Several factors can reduce performance below published benchmarks. First, ensure your GPU clocks are actually boosting: run rocm-smi --showclocks during inference and verify the GPU clock is near maximum. Second, check that the GPU power profile is set to compute (not balanced or power-saving). Third, ensure all model layers are loaded on the GPU and none are falling back to CPU by checking VRAM usage with rocm-smi. Fourth, watch for thermal throttling: if the GPU temperature exceeds roughly 90–95 °C, it reduces clocks, so improve case airflow or adjust the fan curve. Finally, system RAM bandwidth can bottleneck prompt evaluation on AMD systems if you have single-channel memory or slow DDR4.

Will future ROCm versions improve performance enough to close the gap with NVIDIA?

AMD has been steadily improving ROCm performance, and the gap has narrowed significantly from ROCm 5.x to 6.x. The remaining performance difference is primarily in software optimization, not hardware capability. The RX 7900 XTX's memory bandwidth (960 GB/s) is within 5% of the RTX 4090's (1,008 GB/s), yet the RTX 4090 delivers roughly 25% more token throughput in the benchmark table earlier in this guide (~65 vs ~52 t/s). Flash Attention optimizations, better memory allocation patterns, and improved HIP kernel performance are all areas where AMD is actively investing. A reasonable expectation is that ROCm will close to within 5% of CUDA performance for inference workloads within the next 12–18 months, but CUDA will likely maintain a small lead due to its larger engineering team and longer optimization history.