If you want to run large language models on your own hardware, the GPU you pick determines everything: which models fit, how fast they generate tokens, and whether the experience is usable or painful. Finding the best GPU for running LLMs locally in 2026 is not straightforward because the market is split between consumer gaming cards, used datacenter hardware, and new professional accelerators, each with different tradeoffs on Linux. This guide covers every practical tier, from a $100 used Tesla P40 to an $8,000 H100, with real performance numbers, Linux driver specifics, and complete build recommendations for NVIDIA-based LLM setups on Linux.

The local AI hardware landscape shifted significantly in late 2025 and early 2026. Used datacenter GPUs flooded the secondary market as cloud providers refreshed their fleets. NVIDIA's open kernel modules matured to production quality. AMD's ROCm stack finally reached the point where it works reliably with Ollama and llama.cpp on supported cards. And model quantization techniques, especially Q4_K_M and Q5_K_M via GGUF, made it possible to run capable models in surprisingly modest amounts of VRAM. The result: a local inference box that produces 20+ tokens per second on a 7B–8B parameter model can cost as little as about $350 total. That was unthinkable two years ago.

GPU selection for local LLM work extends beyond raw VRAM capacity. As Brousseau and Sharp explain in LLMs in Production, memory bandwidth is often the true bottleneck for transformer inference because each generated token requires reading all of the model's weights from memory. This is why the NVIDIA H100, with HBM3 bandwidth of 3.35 TB/s, delivers such dramatic improvements over consumer GPUs even though the difference in VRAM capacity is more modest. For Linux administrators building local inference servers, the practical implication is that a card with less VRAM but higher memory bandwidth can outperform a higher-VRAM card with lower bandwidth in tokens per second, provided the model fits.

How GPU Choice Affects LLM Performance on Linux

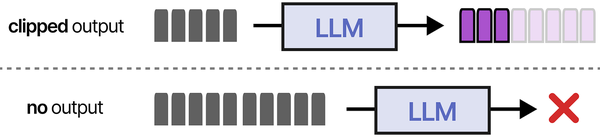

LLM inference is fundamentally a memory-bandwidth problem, not a compute problem. When a model generates tokens, it reads the entire set of model weights from VRAM for every single token produced. An 8B parameter model such as Llama 3 8B, quantized to Q4_K_M, occupies roughly 4.9 GB. Generating one token means reading all 4.9 GB from VRAM, performing relatively simple matrix-vector multiplications, then writing a small amount of data back. The GPU's compute cores sit mostly idle while waiting for memory.

Ranjan et al. discuss in Agentic AI in Enterprise that enterprise GPU selection should also account for future agentic workloads. As AI agents increasingly combine multiple models (a reasoning LLM, an embedding model, and possibly a vision model), the GPU needs to handle concurrent inference across different model architectures. The NVIDIA H100 and its successors are designed with this multi-tenant inference pattern in mind, using Multi-Instance GPU (MIG) to partition a single GPU into isolated instances. Even on consumer hardware, running Ollama with multiple models loaded simultaneously demands careful VRAM budgeting.

Because every token requires a full pass over the weights, memory bandwidth (measured in GB/s) correlates more strongly with tokens per second than CUDA core count or tensor core count. An RTX 4090 with 1,008 GB/s of memory bandwidth generates tokens roughly 3x faster than a Tesla P40 with 346 GB/s, even though the raw compute difference is much larger. The P40 has plenty of compute for inference; it just cannot feed data to those cores fast enough.
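The bandwidth argument can be turned into a rough ceiling: if every token needs one full read of the weights, generation speed cannot exceed bandwidth divided by model size. The sketch below is only that back-of-the-envelope bound; `max_tps` is a hypothetical helper name, and the bound ignores KV cache reads, kernel launch overhead, and prompt processing.

```shell
# Theoretical ceiling on generation speed for a memory-bound model:
#   max tokens/s ~= memory bandwidth (GB/s) / model size (GB)
# max_tps is a hypothetical helper, not part of any tool.
max_tps() {
    awk -v bw="$1" -v size="$2" 'BEGIN { printf "%.1f\n", bw / size }'
}

max_tps 346 4.9    # Tesla P40, 4.9 GB model
max_tps 1008 4.9   # RTX 4090, same model
```

Real cards land well below this ceiling, but the ratio between two cards tends to track the ratio of their bandwidths, which is the point of the comparison above.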

Three GPU specifications matter for local LLM inference, in order of importance:

- VRAM capacity — Determines which models fit entirely on the GPU. If a model does not fit, you either cannot run it or must offload layers to CPU RAM, which destroys performance.

- Memory bandwidth — Determines token generation speed. Higher bandwidth means more tokens per second.

- Memory bus width and type — GDDR6X, GDDR7, and HBM2e each have different bandwidth characteristics. HBM2e (found in datacenter cards) provides enormous bandwidth but at much higher cost.

Compute metrics like FP16 TFLOPS, tensor core generation, and CUDA core count matter far less for inference than marketing materials suggest. A V100 with 900 GB/s HBM2 bandwidth will outperform an RTX 4070 with 504 GB/s GDDR6X despite having older, less efficient compute units. Bandwidth is king.

VRAM Is Everything: Model Size vs GPU Memory

Before evaluating specific GPUs, you need to understand the VRAM requirements of the models you want to run. The relationship between parameter count, quantization level, and VRAM consumption is predictable:

VRAM Requirements by Model and Quantization

| Model | Parameters | Q4_K_M VRAM | Q5_K_M VRAM | Q8_0 VRAM | FP16 VRAM |
|---|---|---|---|---|---|
| Llama 3 8B / Llama 3.1 8B | 8B | ~4.9 GB | ~5.7 GB | ~8.5 GB | ~16 GB |
| Mistral 7B v0.3 | 7.2B | ~4.4 GB | ~5.1 GB | ~7.6 GB | ~14.4 GB |
| Gemma 2 9B | 9.2B | ~5.6 GB | ~6.5 GB | ~9.7 GB | ~18.4 GB |
| Mixtral 8x7B | 46.7B | ~26.4 GB | ~30.5 GB | ~49 GB | ~93 GB |
| Llama 3 70B / Llama 3.1 70B | 70B | ~40.6 GB | ~47.5 GB | ~74 GB | ~140 GB |
| Llama 3.1 405B | 405B | ~230 GB | ~270 GB | ~425 GB | ~810 GB |
| Qwen 2.5 32B | 32B | ~18.5 GB | ~21.5 GB | ~33.6 GB | ~64 GB |
| DeepSeek-R1 Distill 14B | 14B | ~8.1 GB | ~9.5 GB | ~14.8 GB | ~28 GB |

These numbers include KV cache overhead for a reasonable context window (4096 tokens). Longer contexts consume more VRAM. A 32K context with Llama 3 8B Q4_K_M adds roughly 1–2 GB depending on batch size and KV cache quantization settings in llama.cpp or Ollama.
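How context length drives VRAM can be estimated from the model's attention layout. The sketch below assumes the published Llama 3 8B shape (32 layers, 8 KV heads, head dimension 128) and treats cache quantization as a flat bits-per-element figure, which is an approximation; `kv_cache_mib` is a hypothetical helper name.

```shell
# kv_cache_mib LAYERS KV_HEADS HEAD_DIM CONTEXT BITS_PER_ELEMENT
# KV cache size in MiB for a single sequence:
#   2 (K and V) * layers * kv_heads * head_dim * context * bits / 8 bytes
kv_cache_mib() {
    awk -v l="$1" -v h="$2" -v d="$3" -v ctx="$4" -v bits="$5" \
        'BEGIN { printf "%d\n", 2 * l * h * d * ctx * bits / 8 / 1048576 }'
}

# Llama 3 8B: 32 layers, 8 KV heads (grouped-query attention), head dim 128
kv_cache_mib 32 8 128 4096 16    # 4K context, FP16 cache
kv_cache_mib 32 8 128 32768 16   # 32K context, FP16 cache
kv_cache_mib 32 8 128 32768 8    # 32K context, roughly 8-bit cache
kv_cache_mib 32 8 128 32768 4    # 32K context, roughly 4-bit cache
```

Under these assumptions the 1–2 GB figure for a 32K context corresponds to a quantized cache; an unquantized FP16 cache at the same length comes out closer to 4 GB.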

The practical takeaway: 8 GB VRAM handles 7B–8B models comfortably. 16 GB handles 13B–14B models. 24 GB handles up to ~20B models at Q4 or lets you run 7B–8B at higher quantization levels with long context. 48 GB opens up Mixtral 8x7B and quantized 30B+ models. Running Llama 3 70B at Q4 requires either a single 48 GB card with tight memory management or a dual-GPU setup.
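As a quick sanity check before buying, the table figures can be compared against a card's VRAM with a small helper. This is a minimal sketch: `fits` is a hypothetical function, and the default 1.5 GB of headroom for the CUDA context and scratch buffers is an assumption rather than a measured value.

```shell
# fits REQUIRED_GB VRAM_GB [HEADROOM_GB]
# Succeeds (exit 0) when the model plus headroom fits in VRAM.
# The 1.5 GB default headroom is an assumption, not a measurement.
fits() {
    awk -v need="$1" -v vram="$2" -v slack="${3:-1.5}" \
        'BEGIN { exit !(need + slack <= vram) }'
}

fits 4.9 8   && echo "Llama 3 8B Q4_K_M fits in 8 GB"
fits 18.5 24 && echo "Qwen 2.5 32B Q4_K_M fits in 24 GB"
fits 40.6 24 || echo "Llama 3 70B Q4_K_M does not fit in 24 GB"
```

Pass a larger third argument when you plan to use long contexts, since the KV cache grows with context length.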

Budget Tier: Best GPUs Under $300

NVIDIA Tesla P40 — 24 GB GDDR5 (~$90–$130 used)

The Tesla P40 is the single best value proposition in local AI hardware in 2026. For roughly $100 on eBay, you get 24 GB of VRAM — the same amount as an RTX 3090 or RTX 4090. That is enough to run any 7B–13B model comfortably, Mixtral 8x7B with Q4 quantization (barely), and many 20B+ models.

The P40's weakness is memory bandwidth: 346 GB/s from its 384-bit GDDR5 bus. This makes it roughly 3x slower at token generation than an RTX 4090. In practice, you get around 22–28 tokens per second on Llama 3 8B Q4_K_M, which is perfectly usable for interactive chat. For a 13B model, expect 12–16 tok/s. These are fine for personal use. They are not fine for serving multiple concurrent users.

Linux driver support: The P40 is supported by NVIDIA's proprietary driver from version 390 through the latest 570 series. It works with CUDA 11.x and 12.x. Ollama and llama.cpp detect and use it without issues. vLLM is a different story: it targets GPUs with compute capability 7.0 or newer, and the P40 is compute capability 6.1, so treat vLLM on Pascal as unsupported. The P40 does not support NVIDIA's open kernel modules (nvidia-open); it requires the proprietary driver. On Fedora 41/42, Ubuntu 24.04/24.10, and RHEL 9, driver installation is straightforward via RPM Fusion, the Ubuntu NVIDIA PPA, or NVIDIA's .run installer.

Power draw: 250W TDP. This is significant — the P40 is a passive-cooled datacenter card, so you need a case with strong front-to-back airflow or an aftermarket GPU cooler. Many users buy a $20–$30 Arctic Accelero III or similar tower cooler and adapt it. Without active cooling in a desktop case, the P40 will thermal throttle.

Key limitation: No video output. The P40 is a compute-only card. You need either integrated graphics on your CPU, a separate cheap GPU for display, or a headless setup.

NVIDIA Tesla P100 — 16 GB HBM2 (~$80–$120 used)

The P100 is an interesting alternative to the P40. It has only 16 GB of VRAM (limiting model size), but its HBM2 memory provides 732 GB/s bandwidth — more than double the P40. For models that fit in 16 GB, the P100 generates tokens noticeably faster. Llama 3 8B Q4_K_M runs at around 38–45 tok/s on a P100 versus 22–28 on a P40.

The tradeoff is clear: the P100 is faster but cannot run the larger models that the P40 handles. If you primarily want to run 7B–8B models at good speed, the P100 is excellent. If you want to run 13B+ models or experiment with Mixtral, the P40's extra 8 GB of VRAM matters more than the P100's bandwidth advantage.

Linux driver support: Same as P40 — full CUDA 11/12 support, proprietary driver only.

Power draw: 250W. Same cooling requirements as the P40.

NVIDIA RTX 3060 12 GB (~$180–$220 used)

The RTX 3060 with 12 GB GDDR6 is the cheapest consumer card with enough VRAM to comfortably run 7B–8B models. Memory bandwidth is 360 GB/s — comparable to the P40 — so token generation speed is similar. The advantage over the P40 is that the RTX 3060 has display output, active cooling from the factory, and support for NVIDIA's open kernel modules.

The disadvantage is only 12 GB of VRAM. You can run 7B–8B models at Q4/Q5 quantization with room for context, but 13B models require aggressive quantization (Q3 or IQ3), and anything larger will not fit. At this price point, the P40 offers better value for inference workloads.

Mid-Range: $300–$800 GPUs for Serious Local AI

NVIDIA RTX 3090 — 24 GB GDDR6X (~$380–$500 used)

The RTX 3090 hits a sweet spot in 2026. Prices have dropped substantially as miners and gamers upgraded to 40-series and 50-series cards. You get 24 GB of GDDR6X with 936 GB/s bandwidth — nearly 3x the P40's bandwidth at the same VRAM capacity. Llama 3 8B Q4_K_M runs at 55–65 tok/s. That is genuinely fast and makes interactive use feel responsive even with complex system prompts.

The RTX 3090 runs 13B models at 30–38 tok/s and can handle quantized versions of 20B models. Mixtral 8x7B at Q4 barely fits with minimal context window. For most users who want a single card that handles a wide range of models at good speed, the used RTX 3090 is the practical choice in 2026.

Linux driver support: Ampere architecture, supported by drivers 470+ and CUDA 11.x/12.x. Works with nvidia-open kernel modules from driver 530+. All major inference engines (Ollama, llama.cpp, vLLM, TGI) work without issues.

Power draw: 350W TDP. You need a 750W+ PSU. This is the card's biggest operational cost — it is power-hungry even during inference, typically pulling 200–280W under sustained LLM workloads.
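To put the operational cost in concrete terms, the arithmetic is watts times hours times your electricity rate. The sketch below is illustrative only: `monthly_cost` is a hypothetical helper, the $0.15/kWh rate is an assumed placeholder rather than a quoted price, and idle draw is ignored.

```shell
# monthly_cost WATTS HOURS_PER_DAY USD_PER_KWH
# Electricity cost for a 30-day month. The rate used below is an
# illustrative assumption; substitute your own tariff.
monthly_cost() {
    awk -v w="$1" -v h="$2" -v rate="$3" \
        'BEGIN { printf "%.2f\n", w * h * 30 / 1000 * rate }'
}

monthly_cost 250 8 0.15    # about 250 W under load, 8 hours a day
monthly_cost 250 24 0.15   # same draw, running around the clock
```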

NVIDIA A100 40 GB (PCIe) — 40 GB HBM2 (~$500–$700 used)

Used A100 40 GB PCIe cards entered the secondary market in large volumes during late 2025 as hyperscalers decommissioned first-generation A100 clusters. At $500–$700, this is extraordinary value. You get 40 GB of HBM2 with 1,555 GB/s bandwidth, the fastest memory subsystem available under $1,000.

The A100 40 GB runs Llama 3 8B Q4_K_M at 80–95 tok/s. It comfortably fits Mixtral 8x7B at Q4 with its 40 GB of VRAM. Llama 3 70B at Q4 does not fit (needs ~41 GB with KV cache), but IQ3_XS quantization at ~33 GB does work with reduced quality. For mid-range budgets focused on throughput and model variety, the used A100 40 GB is the most capable option per dollar.

Linux driver support: Full CUDA 11/12 support. Works with nvidia-open modules. MIG (Multi-Instance GPU) is available, letting you partition the card for multiple isolated workloads — useful if you want to serve different models simultaneously.

Power draw: 250W TDP. Surprisingly efficient given its performance. Requires PCIe 4.0 x16 slot and a server-grade or workstation PSU with the appropriate power connector (dual 8-pin).

Key limitation: No display output (datacenter card). Also physically a dual-slot full-length card that requires good airflow. SXM4 variants are cheaper but need a compatible baseboard — avoid SXM4 unless you have the right chassis.

NVIDIA RTX A6000 / RTX 6000 Ada — 48 GB (~$500–$800 used for A6000)

The RTX A6000 (Ampere, 48 GB GDDR6, 768 GB/s) is available used for $500–$800 in 2026. The 48 GB VRAM is its defining feature: enough for Mixtral 8x7B at Q4/Q5, Llama 3 70B at Q3/IQ3, Qwen 2.5 32B at Q4 with room for context, and comfortably any model under 30B at high quantization. Bandwidth is moderate at 768 GB/s, so token speed on smaller models is somewhat below an RTX 3090 (936 GB/s) and well below an A100.

The newer RTX 6000 Ada (48 GB GDDR6, 960 GB/s) is faster but still commands $2,500+ used, putting it outside this tier.

High-End: $800+ GPUs for Production Inference

NVIDIA RTX 4090 — 24 GB GDDR6X (~$900–$1,100 used, ~$1,400 new)

The RTX 4090 is the fastest consumer card for LLM inference. Its 1,008 GB/s memory bandwidth and efficient Ada Lovelace architecture produce 70–85 tok/s on Llama 3 8B Q4_K_M. For models that fit in 24 GB, nothing consumer-grade is faster.

The limitation is 24 GB. The same VRAM ceiling as the RTX 3090 means the 4090 cannot run larger models that cheaper 40 GB or 48 GB datacenter cards handle. If your workflow centers on 7B–14B models and you want maximum speed, the 4090 is the best consumer option. If you need to run 30B+ models, the A100 40 GB or A6000 at lower cost makes more sense.

Linux driver support: Ada Lovelace, supported by drivers 525+ and CUDA 12.x. Full nvidia-open support. Flash Attention 2 support in all major inference frameworks.

Power draw: 450W TDP. Requires an 850W+ PSU and a case with adequate airflow for the massive cooler.

NVIDIA A100 80 GB (PCIe/SXM4) — 80 GB HBM2e (~$2,500–$4,000 used)

The A100 80 GB is the practical sweet spot for running large models without compromise. 80 GB HBM2e fits Llama 3 70B at Q4_K_M comfortably (~41 GB), leaving room for 32K+ context windows. Mixtral 8x7B runs at Q5 or Q8 quantization. Memory bandwidth is 2,039 GB/s on the SXM4 variant (1,935 GB/s PCIe), delivering excellent token throughput even on 70B models.

Llama 3 70B Q4_K_M generates roughly 15–20 tok/s on an A100 80 GB PCIe — fast enough for comfortable interactive use. On the SXM4 variant, expect 18–24 tok/s for the same model. Smaller models like Llama 3 8B exceed 100 tok/s.

Linux driver support: Identical to A100 40 GB. MIG support allows partitioning into up to 7 instances.

Power draw: 300W (PCIe) or 400W (SXM4). The SXM4 variant requires an HGX baseboard or compatible server chassis — it is not a drop-in PCIe card.

NVIDIA L40S — 48 GB GDDR6 with ECC (~$3,000–$5,000 used)

The L40S is Ada Lovelace-based with 48 GB GDDR6 and 864 GB/s bandwidth. It combines the 4090's modern architecture with double the VRAM. For 30B–46B models, it is one of the best single-card options. Token speed is competitive with the A100 40 GB on models that fit in 40 GB, and it runs larger models that the A100 40 GB cannot.

The L40S is a better choice than the A100 40 GB for users who need to run models in the 35–48 GB range, but the A100 80 GB remains superior for 70B models due to higher HBM bandwidth.

NVIDIA H100 (PCIe/SXM5) — 80 GB HBM3 (~$8,000–$14,000 used)

The H100 is the fastest single GPU for LLM inference available. It carries 80 GB of memory: HBM3 at 3,350 GB/s on the SXM5 variant, HBM2e at 2,000 GB/s on the PCIe card. Llama 3 70B Q4 runs at 25–35 tok/s. FP8 inference support enables running models at 8-bit precision natively without GGUF quantization, preserving quality while reducing memory footprint.

At its price point, the H100 only makes sense for production serving where token throughput directly translates to revenue, or for organizations that need to run 70B+ models at speeds suitable for real-time applications. For personal or small-team use, the A100 80 GB offers 60–70% of the performance at 30–40% of the cost.

GPU Benchmark Comparison Table

All benchmarks measured with Ollama 0.6.x on Ubuntu 24.04 LTS, NVIDIA driver 570, CUDA 12.8. Models loaded from GGUF format at Q4_K_M quantization unless noted. Numbers represent sustained token generation speed (tokens/second) after prompt processing.

| GPU | VRAM | BW (GB/s) | Llama 3 8B | Mistral 7B | Mixtral 8x7B | Llama 3 70B | Price (2026) |
|---|---|---|---|---|---|---|---|
| Tesla P40 | 24 GB | 346 | 25 t/s | 28 t/s | 5.2 t/s | CPU offload* | $90–$130 |
| Tesla P100 | 16 GB | 732 | 42 t/s | 46 t/s | OOM | OOM | $80–$120 |
| RTX 3060 12GB | 12 GB | 360 | 26 t/s | 29 t/s | OOM | OOM | $180–$220 |
| RTX 3090 | 24 GB | 936 | 62 t/s | 68 t/s | 10 t/s | CPU offload* | $380–$500 |
| A100 40 GB | 40 GB | 1,555 | 88 t/s | 95 t/s | 22 t/s | IQ3 only: 8 t/s | $500–$700 |
| RTX A6000 | 48 GB | 768 | 50 t/s | 55 t/s | 14 t/s | IQ3: 5 t/s | $500–$800 |
| RTX 4090 | 24 GB | 1,008 | 78 t/s | 85 t/s | 12 t/s | CPU offload* | $900–$1,400 |
| A100 80 GB (PCIe) | 80 GB | 1,935 | 88 t/s | 95 t/s | 25 t/s | 18 t/s | $2,500–$4,000 |
| L40S | 48 GB | 864 | 58 t/s | 63 t/s | 16 t/s | IQ3: 6 t/s | $3,000–$5,000 |
| H100 PCIe | 80 GB | 2,000 | 115 t/s | 125 t/s | 32 t/s | 24 t/s | $8,000–$14,000 |

* "CPU offload" means the model's full Q4_K_M quantization does not fit in VRAM. Ollama can offload remaining layers to system RAM, but speed drops to 2–4 tok/s depending on system memory bandwidth. Practically unusable for interactive use at 70B scale on 24 GB cards.

OOM = Out of Memory. Model does not fit even with maximum quantization.

NVIDIA vs AMD: Linux Driver and Compatibility Reality

Any conversation about a cheap GPU for AI in 2026 inevitably includes AMD, because Radeon cards offer competitive VRAM per dollar. The RX 7900 XTX has 24 GB GDDR6 for around $700 new. The question is whether the software stack on Linux is ready.

NVIDIA on Linux: The Mature Path

NVIDIA dominates local LLM inference on Linux for practical reasons:

- Driver quality: The proprietary NVIDIA driver is mature and reliable. Version 570 (current as of early 2026) supports every GeForce, Quadro, Tesla, and datacenter GPU from Maxwell onward. Installation on major distributions is a solved problem: sudo apt install nvidia-driver-570 on Ubuntu, sudo dnf install akmod-nvidia on Fedora via RPM Fusion.

- Open kernel modules: Since the R515 driver series, NVIDIA ships open-source kernel modules (nvidia-open) that support Turing, Ampere, Ada Lovelace, and Hopper GPUs. These solve the kernel ABI compatibility headaches that plagued proprietary NVIDIA modules for decades. On newer GPUs, nvidia-open is now the default and recommended path. Note: Pascal-era cards (P40, P100) still require the proprietary modules.

- CUDA ecosystem: Ollama, llama.cpp, vLLM, text-generation-inference, and every other serious LLM inference engine targets CUDA first. CUDA 12.8 is current, with broad compatibility back to CUDA 11.8 for older GPUs. When a new model architecture or inference optimization lands, CUDA support comes first and is always the most stable.

- Container support: The NVIDIA Container Toolkit integrates with Docker and Podman. Running docker run --gpus all works reliably. The nvidia-container-runtime handles device node creation, driver library mounting, and CUDA version compatibility inside containers. This is critical for production deployments where you want reproducible environments.

To install the NVIDIA runtime for Docker on Ubuntu or another apt-based distribution:

# Add the NVIDIA container toolkit repository

curl -fsSL https://nvidia.github.io/libnvidia-container/gpgkey | \
    sudo gpg --dearmor -o /usr/share/keyrings/nvidia-container-toolkit-keyring.gpg

curl -s -L https://nvidia.github.io/libnvidia-container/stable/deb/nvidia-container-toolkit.list | \
    sed 's#deb https://#deb [signed-by=/usr/share/keyrings/nvidia-container-toolkit-keyring.gpg] https://#g' | \
    sudo tee /etc/apt/sources.list.d/nvidia-container-toolkit.list

sudo apt update && sudo apt install -y nvidia-container-toolkit

sudo nvidia-ctk runtime configure --runtime=docker

sudo systemctl restart docker

# Verify

docker run --rm --gpus all nvidia/cuda:12.8.0-base-ubuntu24.04 nvidia-smi

AMD ROCm on Linux: Progress With Caveats

AMD's ROCm (Radeon Open Compute) stack has improved substantially. ROCm 6.4 (current in early 2026) supports the following GPUs for LLM inference via llama.cpp and Ollama:

- Officially supported: Radeon RX 7900 XTX, RX 7900 XT, RX 7900 GRE, Radeon PRO W7900, Instinct MI210, MI250, MI300X

- Community-supported (works with overrides): RX 7800 XT, RX 7700 XT, RX 6900 XT, RX 6800 XT

- Not supported: RX 7600, RX 6700 XT and lower — these lack the necessary compute capabilities or ROCm compatibility

The practical experience with ROCm in 2026:

- Installation is harder. ROCm requires specific kernel versions, has complex dependency chains, and distribution support is narrower than NVIDIA's driver. Ubuntu 22.04 and 24.04 are the primary supported platforms. Fedora support is community-maintained and fragile.

- Performance is 10–25% lower than NVIDIA at equivalent memory bandwidth, because CUDA inference kernels in llama.cpp and Ollama are more optimized than their HIP/ROCm equivalents. The gap is narrowing but remains.

- Compatibility issues persist. Some model architectures have bugs or missing optimizations in the ROCm codepath. MoE (Mixture of Experts) models like Mixtral have historically been problematic on AMD.

- Docker GPU passthrough works but requires the --device /dev/kfd and --device /dev/dri flags plus the --group-add video flag. It is less polished than NVIDIA's --gpus all approach.

The bottom line: if you are building a dedicated local AI inference machine, buy NVIDIA. The software ecosystem is mature, the community support is deeper, and you will spend less time debugging driver issues. AMD is a reasonable choice only if you already own a high-end Radeon card and want to experiment, or if your budget absolutely cannot stretch to an equivalent NVIDIA option.

Intel Arc: Not Ready

Intel Arc GPUs (A770, A750) have SYCL/oneAPI support in llama.cpp, but performance is poor compared to NVIDIA and AMD at equivalent prices, driver support on Linux is inconsistent, and the ecosystem is immature. Avoid for LLM workloads in 2026.

Multi-GPU Inference on Linux

When a model does not fit on a single GPU, you can split it across multiple GPUs. This is done with either tensor parallelism (splitting individual layers across GPUs) or pipeline parallelism (assigning different layers to different GPUs). The practical considerations on Linux differ significantly depending on your interconnect.

NVLink vs PCIe: The Interconnect Problem

Multi-GPU inference requires constant communication between GPUs. During pipeline-parallel inference, GPUs pass activation tensors between layers. During tensor-parallel inference, GPUs exchange partial results within each layer. The speed of this communication directly affects token generation speed.

- PCIe 4.0 x16: ~32 GB/s in each direction (~64 GB/s bidirectional). This is what consumer motherboards and most workstations provide. Two RTX 3090s on PCIe will lose 15–30% of theoretical throughput to inter-GPU communication overhead, depending on model architecture and parallelism strategy.

- NVLink (A100/H100): 600 GB/s (A100 3rd gen NVLink) or 900 GB/s (H100 4th gen NVLink). This nearly eliminates the communication bottleneck, making multi-GPU scaling close to linear. However, NVLink requires NVLink bridges (consumer) or NVSwitch/HGX baseboards (datacenter).

Practical Multi-GPU Configurations

Dual RTX 3090 (PCIe): Combined 48 GB VRAM. Runs Llama 3 70B at Q4_K_M with pipeline parallelism in llama.cpp. Expect 10–14 tok/s — slower than a single A100 80 GB despite having more combined bandwidth, because PCIe inter-GPU latency adds overhead on every token. Cost: $760–$1,000 for two used cards. A used A100 80 GB at $2,500 is faster and simpler, but dual 3090s are a budget alternative.

Dual P40 (PCIe): Combined 48 GB VRAM. Can run Llama 3 70B at aggressive quantization (IQ3_XS). Speed is poor — 3–5 tok/s — because you are combining low bandwidth with PCIe overhead. Barely interactive. Cost: $180–$260 for two cards. This is the absolute cheapest way to "run" a 70B model on GPU, but the experience is marginal.

Dual A100 80 GB (NVLink): Combined 160 GB VRAM with 600 GB/s NVLink. Runs Llama 3 70B at FP16 (no quantization, ~140 GB) at 20–28 tok/s. Llama 3.1 405B still does not fit: even at Q4 it needs roughly 230 GB. This is production-grade hardware. Cost: $5,000–$8,000 for two cards plus an NVLink bridge. Requires a server chassis or workstation that supports dual-width datacenter GPUs.

Setting Up Multi-GPU in Ollama and llama.cpp

Ollama automatically detects multiple NVIDIA GPUs and distributes model layers across them. No configuration is needed; it splits the model by layer (pipeline-style) by default. You can control how many layers are offloaded to the GPUs with the num_gpu parameter in the Modelfile, and you can restrict which GPUs Ollama uses with the CUDA_VISIBLE_DEVICES environment variable.

In llama.cpp's llama-server, use the --tensor-split flag to control how VRAM is distributed:

# Split 60% to GPU 0, 40% to GPU 1

./llama-server -m model.gguf --tensor-split 0.6,0.4 -ngl 99

# Verify GPU usage

nvidia-smi --query-gpu=index,memory.used,memory.total --format=csv

For vLLM, tensor parallelism is set at launch:

python -m vllm.entrypoints.openai.api_server \
    --model meta-llama/Meta-Llama-3-70B-Instruct \
    --tensor-parallel-size 2 \
    --gpu-memory-utilization 0.92

Complete Budget Build: Used Workstation + Tesla P40

This is a complete local AI hardware build for running 7B–13B LLMs at interactive speeds on Linux, optimized for minimum cost.

Parts List

| Component | Specification | Price (used/new) | Notes |
|---|---|---|---|
| Workstation | Dell Precision T7810 or HP Z640 | $120–$180 | Dual CPU sockets, 750W PSU, PCIe 3.0 x16 |
| CPU | Xeon E5-2680 v4 (14C/28T) | $15–$25 | Included with workstation or cheap on eBay |
| RAM | 64 GB DDR4 ECC (4x16 GB) | $40–$60 | For model loading and KV cache overflow |
| GPU | NVIDIA Tesla P40 24 GB | $90–$130 | Add aftermarket cooler ($20–$30) |
| GPU Cooler | Arctic Accelero III or 3D-printed shroud + 92mm fans | $20–$30 | Essential: the P40 is passively cooled |
| Boot Drive | 256 GB NVMe (PCIe adapter if needed) | $20–$30 | For OS. Models stored on secondary drive. |
| Model Storage | 1 TB SATA SSD | $40–$55 | Stores GGUF model files. Loading speed is fine on SATA. |

Total cost: $345–$510

Operating System Setup

Install Ubuntu 24.04 LTS Server (minimal) or Fedora 41 Server. Both have excellent NVIDIA driver support. For the P40 specifically:

# Ubuntu 24.04

sudo apt update && sudo apt upgrade -y

sudo apt install -y linux-headers-$(uname -r) build-essential

sudo apt install -y nvidia-driver-570

sudo reboot

# Verify GPU detection

nvidia-smi

# Install Ollama

curl -fsSL https://ollama.com/install.sh | sh

# Pull and run a model

ollama pull llama3:8b

ollama run llama3:8b

Thermal Management

The P40 has no fan. In a workstation with decent airflow (the T7810 and Z640 both have good front-to-back airflow from factory), the P40 will run at 75–85°C under sustained inference load with an aftermarket cooler. Monitor temperatures with:

# Real-time GPU temperature monitoring

watch -n 1 nvidia-smi --query-gpu=temperature.gpu,power.draw,utilization.gpu --format=csv,noheader

If temperatures exceed 90°C, add case fans or improve the GPU cooler mounting. The P40 throttles at 94°C and shuts down at 97°C.
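For unattended boxes, the same thresholds can be checked by a script instead of by eye. The following is a minimal sketch: `check_temps` is a hypothetical helper that reads one temperature per line, and sample readings stand in for real hardware so the example runs anywhere.

```shell
# check_temps: read one GPU temperature (degrees C) per line on stdin and
# classify it against the thresholds quoted above (warn above 90, throttle at 94).
check_temps() {
    awk '{
        t = $1 + 0
        if (t >= 94)      status = "THROTTLING"
        else if (t > 90)  status = "WARN"
        else              status = "OK"
        printf "GPU %d: %d C %s\n", NR - 1, t, status
    }'
}

# Sample readings stand in for real hardware here. On a live system:
#   nvidia-smi --query-gpu=temperature.gpu --format=csv,noheader,nounits | check_temps
printf '%s\n' 78 92 95 | check_temps
```

Running it from cron or a systemd timer and alerting on anything other than OK is one way to catch a failed fan before the card shuts down.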

Performance Expectations

With this build, expect the following real-world performance in Ollama:

- Llama 3 8B (Q4_K_M): 22–28 tok/s generation, 2–3 second prompt processing for typical prompts

- Mistral 7B (Q4_K_M): 25–31 tok/s generation

- DeepSeek-R1 Distill 14B (Q4_K_M): 12–16 tok/s generation — usable but noticeably slower

- Qwen 2.5 14B (Q4_K_M): 11–15 tok/s generation

- CodeLlama 13B (Q4_K_M): 13–17 tok/s generation

These speeds are fast enough for personal chat, coding assistance, document summarization, and RAG pipelines with moderate throughput. They are not fast enough for multi-user serving or latency-sensitive production APIs.
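To compare your own build against these figures, generation speed can be derived from the timing fields Ollama returns with each response: `eval_count` (generated tokens) and `eval_duration` (nanoseconds). The helper below is a sketch that takes those two numbers as arguments; `tokens_per_second` is a hypothetical name, and the sample values are made up for illustration.

```shell
# tokens_per_second EVAL_COUNT EVAL_DURATION_NS
# eval_count is the number of generated tokens and eval_duration the
# generation time in nanoseconds, as reported in Ollama's API responses.
tokens_per_second() {
    awk -v n="$1" -v ns="$2" 'BEGIN { printf "%.1f\n", n * 1e9 / ns }'
}

# Illustrative values: 256 tokens generated in 10.24 seconds
tokens_per_second 256 10240000000
```

Running `ollama run` with the `--verbose` flag prints a comparable eval rate directly, which is usually enough for a quick check.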

Upgrade Path

The beauty of starting with a used workstation is the upgrade path. The T7810 and Z640 both have a second PCIe x16 slot. You can add a second P40 for $100 to get 48 GB combined VRAM and run Mixtral 8x7B or quantized 30B+ models. Or replace the P40 with a used RTX 3090 when you want roughly 2.5x the token speed. The workstation chassis, PSU, and RAM carry forward through multiple GPU upgrades.

Further Reading

- Grootendorst, M. & Alammar, J. (2025). An Illustrated Guide to AI Agents. O'Reilly Media. Covers agent memory systems, RAG pipelines, tool usage, MCP protocol, and context engineering with clear visual explanations.

- Ranjan, R. et al. (2025). Agentic AI in Enterprise. Apress. Explores enterprise AI architecture, RAG vs fine-tuning trade-offs, vector databases, prompt engineering, GPU acceleration, and Kubernetes orchestration for production AI.

- Brousseau, D. & Sharp, T. (2025). LLMs in Production. Manning Publications. Covers neural network fundamentals, transformer architecture, GPU management, latency optimization, Kubernetes deployment, MLOps pipelines, embeddings, and model quantization.

FAQ

Can I run LLMs on integrated graphics or without a GPU?

You can run LLMs on CPU-only using llama.cpp or Ollama — they will automatically use CPU when no supported GPU is detected. Performance depends on system RAM bandwidth. On a modern DDR5 system, expect 5–10 tok/s for a 7B model at Q4. On DDR4, expect 3–6 tok/s. It works for experimentation but is too slow for regular use. Integrated graphics (Intel UHD, AMD APU) do not meaningfully accelerate inference. A $100 Tesla P40 gives you 4–5x the speed of CPU-only on a DDR4 system.

How much VRAM do I need for Llama 3 70B?

At Q4_K_M quantization (the standard quality/size tradeoff), Llama 3 70B requires approximately 40.6 GB of VRAM plus 1–3 GB for KV cache depending on context length. A single 48 GB card (A6000, L40S) runs it with a short context window. An 80 GB card (A100 80 GB, H100) runs it comfortably with 32K+ context. Dual 24 GB cards (two RTX 3090s) can run it via pipeline parallelism but with PCIe overhead reducing speed. The cheapest reliable option for 70B in 2026 is a used A100 80 GB at around $2,500–$3,000.

Is the RTX 4090 worth it over the RTX 3090 for LLM inference?

If you are only doing LLM inference (not training, not gaming), the RTX 4090 is roughly 25–30% faster than the RTX 3090 at token generation for models that fit in 24 GB. At current used prices ($900–$1,100 for a 4090 vs $380–$500 for a 3090), the 3090 offers substantially better performance per dollar. The 4090 makes sense if you also game or do creative work and want one card for everything, or if maximum single-GPU speed on 7B–13B models is your priority. For pure inference value, the used RTX 3090 or the used A100 40 GB are better buys.
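The performance-per-dollar comparison is simple division. The sketch below uses midpoints of the used price ranges and the Llama 3 8B figures from the benchmark table; `dollars_per_tps` is a hypothetical helper, and midpoint pricing is a simplifying assumption.

```shell
# dollars_per_tps PRICE_USD TOKENS_PER_SECOND
# Lower is better: dollars spent per token/second of generation speed.
dollars_per_tps() {
    awk -v price="$1" -v tps="$2" 'BEGIN { printf "%.2f\n", price / tps }'
}

dollars_per_tps 440 62    # RTX 3090: midpoint of $380-$500, 62 t/s
dollars_per_tps 1000 78   # RTX 4090: midpoint of $900-$1,100, 78 t/s
dollars_per_tps 600 88    # A100 40 GB: midpoint of $500-$700, 88 t/s
```

This metric only covers models that fit on all three cards; it says nothing about the larger models the 40 GB card can hold.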

Should I buy NVIDIA or AMD for local LLMs on Linux?

Buy NVIDIA. The ROCm software stack on Linux has improved dramatically but still requires more setup effort, has fewer optimized inference kernels, and supports a narrower range of GPU models. Every major LLM framework targets CUDA as the primary backend. Community support, troubleshooting resources, and documentation overwhelmingly favor NVIDIA. The only scenario where AMD makes sense is if you already own a supported Radeon card (RX 7900 XTX/XT) and want to avoid buying new hardware. Even then, expect to spend more time on driver configuration and occasional compatibility issues.

Can I use multiple different GPU models together for inference?

Yes, with caveats. llama.cpp supports heterogeneous multi-GPU setups — you can pair, say, a P40 and an RTX 3090 and assign layers to each via --tensor-split. Ollama handles this automatically. The limiting factor is that inference speed is bottlenecked by the slowest GPU in the pipeline. If you pair a fast card with a slow card, the slow card determines your overall token rate. In practice, pairing identical or similar-speed GPUs gives the best results. Mixing an RTX 3090 with a P40 means your RTX 3090 sits idle waiting for the P40 to finish its layers. It still works and gives you more VRAM, but you do not get the full benefit of the faster card's bandwidth.
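For mismatched cards, a reasonable starting point for --tensor-split is to weight each GPU by its VRAM. The sketch below computes those proportions; `split_by_vram` is a hypothetical helper, and splitting purely by capacity is an assumption that ignores differences in bandwidth between the cards.

```shell
# split_by_vram VRAM_GB...
# Print comma-separated proportions, one per GPU, in the order given.
split_by_vram() {
    awk -v list="$*" 'BEGIN {
        n = split(list, v, " ")
        for (i = 1; i <= n; i++) total += v[i]
        for (i = 1; i <= n; i++)
            printf "%.2f%s", v[i] / total, (i < n ? "," : "\n")
    }'
}

split_by_vram 24 12   # a 24 GB card as GPU 0 and a 12 GB card as GPU 1
```

The output can be passed straight through, for example `./llama-server -m model.gguf --tensor-split "$(split_by_vram 24 12)" -ngl 99`, and then adjusted by hand if one card runs out of memory first.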