The NVIDIA Tesla P40 is the most compelling piece of used datacenter hardware you can buy for local AI inference in 2026. For around $100 on eBay, you get a GPU with 24 GB of VRAM, the same capacity as an RTX 3090 that costs four times as much. The catch? It is a passively cooled server card with no display output, it needs specific driver versions, and it draws up to 250 watts. None of these are deal-breakers. This guide walks through building a complete NVIDIA P40 Ollama server on Linux, from parts list to first inference, with every practical detail covered: cooling, power, drivers, benchmarks, and which models actually run well on this hardware.

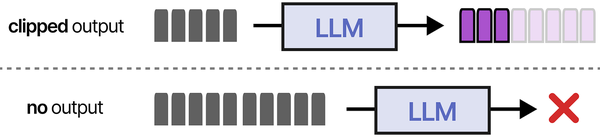

The Tesla P40's 24 GB of VRAM makes it a surprisingly capable platform for local LLM work. Brousseau and Sharp explain in LLMs in Production that the primary bottleneck for transformer inference is memory bandwidth, not compute. The P40's 346 GB/s bandwidth is lower than modern GPUs, which means tokens-per-second will be slower, but the generous VRAM allows loading larger models (or more heavily quantized versions of the largest models) than consumer cards with only 8-12 GB. Their cost-efficiency analysis shows that used enterprise GPUs like the P40 deliver the lowest cost-per-token for workloads that do not require real-time response latency.

The P40 exists in a sweet spot that no modern consumer GPU can match at its price. Modern budget GPUs like the RTX 4060 offer only 8 GB of VRAM, which limits you to 7B models. The P40's 24 GB opens the door to 13B–14B models comfortably, quantized versions of 20B–32B models, and even Mixtral 8x7B if you squeeze. For personal use, homelab experimentation, or small team deployments where token generation speed is less critical than model variety, nothing else comes close on a per-dollar basis.

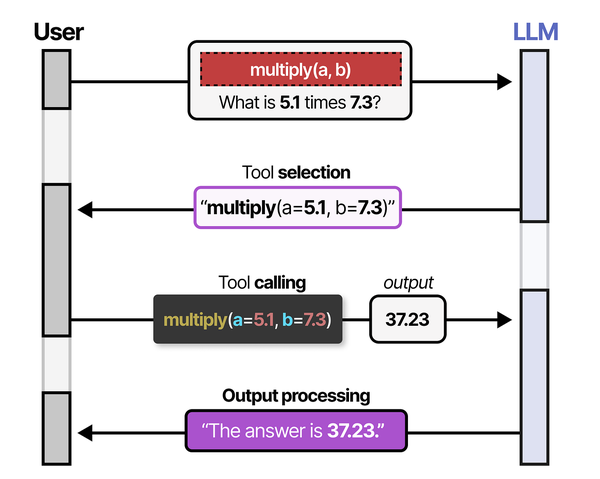

Ranjan et al. note in Agentic AI in Enterprise that budget LLM servers built on used enterprise GPUs are an excellent way for organizations to prototype AI applications before committing to expensive infrastructure. A P40-based server running Ollama can host a private chatbot, an embedding pipeline for RAG, and a code-assistance model simultaneously, allowing teams to validate use cases before scaling to modern GPUs like the L40S or H100.

Why the Tesla P40 for Local LLMs

The Tesla P40 was released in 2016 as a datacenter inference and virtualization accelerator. It uses the Pascal architecture (GP102 chip) with 3,840 CUDA cores and 24 GB of GDDR5 memory on a 384-bit bus, providing 346 GB/s of memory bandwidth. Major cloud providers deployed thousands of them, and as those providers upgraded to Volta (V100) and Ampere (A100) hardware, the P40s hit the secondary market in massive quantities. Basic supply and demand did the rest: with lots of supply and limited demand from gamers (no display output), prices cratered.

P40 Specifications at a Glance

| Specification | Value |
|---|---|
| GPU Architecture | Pascal (GP102) |
| CUDA Cores | 3,840 |
| VRAM | 24 GB GDDR5 |
| Memory Bus Width | 384-bit |
| Memory Bandwidth | 346 GB/s |
| TDP | 250 W |
| Cooling | Passive (no fan) |
| Display Output | None |
| PCIe Interface | PCIe 3.0 x16 |
| FP32 Performance | 12 TFLOPS |
| FP16 Performance | No fast native FP16 (no tensor cores) |
| INT8 Performance | 47 TOPS |
| Power Connectors | 1× 8-pin EPS (CPU-style), typically fed through a dual PCIe 8-pin adapter |
| Form Factor | Full-height, dual-slot, 267 mm long |

The lack of fast FP16 support is worth noting. Newer GPUs (Volta and later) have tensor cores that accelerate half-precision math by 2–4x. The P40 has none, and GP102 executes native FP16 arithmetic at only a small fraction of its FP32 rate, so inference software effectively falls back to FP32 math on this card. For GGUF quantized models (Q4, Q5, Q8) this barely matters because llama.cpp uses integer and mixed-precision operations that the P40 handles fine. It does mean the P40 is a poor choice for training or running models in full FP16/BF16 precision, but that is not what you buy a $100 GPU for.
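To see which quantizations fit in 24 GB, a rough size estimate is parameters × bits per weight ÷ 8. The sketch below is a back-of-the-envelope helper, not how llama.cpp or Ollama report sizes. The function name `est_gb` is made up for illustration. The 8.03 billion parameter count is Llama 3.1 8B's published size, Q8_0's 8.5 bits per weight follows from its block layout (32 8-bit weights plus one 16-bit scale), and the ~4.85 figure for Q4_K_M is an assumed average that varies from model to model.

```shell
#!/bin/sh
# est_gb PARAMS_BILLIONS BITS_PER_WEIGHT
# Prints the approximate weight size in GB (10^9 bytes), one decimal place.
# Weights only: the KV cache and runtime buffers need extra VRAM on top.
est_gb() {
    awk -v p="$1" -v b="$2" 'BEGIN { printf "%.1f\n", p * b / 8 }'
}

est_gb 8.03 4.85   # Llama 3.1 8B at ~4.85 bits/weight (assumed Q4_K_M average)
est_gb 8.03 8.5    # Llama 3.1 8B at Q8_0 (8.5 bits/weight)
```

The two calls print 4.9 and 8.5. Treat the result as a lower bound on VRAM use, since context and buffers come on top of the weights.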

Complete Parts List and Pricing

Building a P40 inference server is about finding the cheapest possible platform that does not bottleneck the GPU. The P40 needs a PCIe 3.0 x16 slot, an adequate PSU, and a cooling solution. Everything else is secondary.

Option A: Used Server Build (~$235–$360)

| Component | Recommendation | Price (USD) | Where to Buy |
|---|---|---|---|
| GPU | NVIDIA Tesla P40 24 GB | $90–$130 | eBay, AliExpress |
| Host System | Dell PowerEdge T620 or HP ProLiant ML350p (used) | $80–$120 | eBay, IT resellers |
| RAM | 32 GB DDR3 ECC (usually included with server) | $0–$30 | Included or eBay |
| Boot Drive | 240–500 GB SATA SSD | $20–$35 | Amazon |
| Model Storage | 1 TB SATA SSD (optional, for many models) | $45 | Amazon |
| GPU Cooling | Not needed; the server chassis has built-in fans | $0 | N/A |
| Total | Including the optional model storage drive | $235–$360 | |

The used tower server approach is ideal because these machines already have the high-wattage PSU, adequate airflow, and PCIe slots that the P40 needs. The T620 commonly comes with dual Xeon E5-2600 v2 series CPUs, 32–64 GB RAM, and a 750W+ redundant PSU. You plug in the P40, install an SSD, and you have a working inference server.

The downside of used servers: they are loud. Tower servers use high-RPM fans designed for datacenter environments. If this machine lives in your closet or garage, the noise is fine. If it sits in your office, you will hear it.

Option B: Desktop Build (~$375–$525)

| Component | Recommendation | Price (USD) | Where to Buy |
|---|---|---|---|
| GPU | NVIDIA Tesla P40 24 GB | $90–$130 | eBay, AliExpress |
| CPU | Intel i3-12100 or AMD Ryzen 3 3100 | $60–$80 | Amazon, eBay |
| Motherboard | B660 (Intel) or B450 (AMD) with PCIe x16 | $55–$75 | Amazon |
| RAM | 16–32 GB DDR4 | $25–$45 | Amazon |
| Boot Drive | 500 GB NVMe SSD | $30 | Amazon |
| PSU | 650W 80+ Bronze or better | $50–$65 | Amazon |
| Case | Mid-tower with strong front-to-back airflow | $40–$55 | Amazon |
| GPU Cooling | Aftermarket cooler (see section below) | $25–$45 | Amazon, AliExpress |
| Total | | $375–$525 | |

The desktop build costs slightly more but gives you a quieter system with modern components. The CPU does not matter much for inference — even a budget quad-core handles the CPU-side work (tokenization, context management) without bottlenecking. The PCIe 3.0 vs 4.0 slot difference is irrelevant for the P40 since it is a PCIe 3.0 card.
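Because every line item is a price range, it is easy to mis-add a build total. The snippet below is a small sketch that sums the low and high ends of a parts list. The helper name `sum_ranges` is invented for this example, and the numbers are the Option B ranges from the table above.

```shell
#!/bin/sh
# sum_ranges reads "low high" price pairs (one component per line) on stdin
# and prints the summed low and high totals.
sum_ranges() {
    awk '{ lo += $1; hi += $2 } END { printf "%d %d\n", lo, hi }'
}

# Option B (desktop build) price ranges in USD.
# Single-price items repeat the same value for low and high.
sum_ranges <<'EOF'
90 130
60 80
55 75
25 45
30 30
50 65
40 55
25 45
EOF
```

This prints `375 525`. Swap in your own quotes, one component per line, to re-total a build as prices move.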

Cooling Solutions for the Tesla P40

This is the single most important practical consideration. The P40 is a passive-cooled card designed for server chassis with powerful front-to-back airflow. In a desktop case without directed airflow over the GPU heatsink, it will thermal throttle within minutes and eventually shut down to protect itself.

Option 1: Aftermarket GPU Cooler

The most reliable desktop solution is replacing the P40's passive heatsink with an aftermarket tower cooler designed for GPUs.

# Popular cooler choices for the P40:

# - Arctic Accelero Xtreme III or IV (~$35-$45)

# Fits well, excellent cooling, requires 3-slot clearance

# - Raijintek Morpheus II (~$40-$50)

# Better build quality, universal GPU bracket

# - ID-COOLING SE-234 GPU adapter + 120mm fan (~$25)

# Budget option, good enough for sustained loads

# After installing a cooler, verify GPU temperature under load:

nvidia-smi --query-gpu=temperature.gpu --format=csv -l 1

# Target: under 80°C under sustained inference load

# Ideal: 65-75°C range for longevity

Installing an aftermarket cooler on the P40 typically involves removing the original heatsink (8–12 screws), cleaning the GPU die, applying thermal paste, and mounting the new cooler with the provided bracket and thermal pads for VRAM chips. The process takes 30–60 minutes. There are numerous YouTube videos showing the exact steps for the P40 specifically.

Option 2: Case Fan Blowdown Method

If you do not want to modify the GPU, you can direct airflow from case fans onto the P40's stock heatsink. This requires a case with fan mount positions above or beside the GPU slot.

# Mounting approach:

# - Install 2× 120mm or 2× 140mm fans above the GPU, pointing down

# - Use zip ties or fan brackets to position them directly over the P40 heatsink

# - Set fan speed to 100% or use a PWM fan controller

# This approach keeps temperatures under 85°C at moderate loads

# but may not prevent throttling during extended continuous inference

# Works best in cases with mesh top panels (e.g., Meshify C, Lancool 215)

Option 3: Server Chassis (No Modification Needed)

If you chose the used server route (Option A in the parts list), the chassis fans provide exactly the airflow the P40 was designed for. Just install the card in the PCIe slot and the server's fan controller handles cooling automatically. GPU temperatures typically stay under 70°C even at sustained full load.
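Whichever cooling option you pick, it helps to judge it on a logged run rather than a single glance at nvidia-smi. The sketch below summarizes a list of temperature samples against the 80°C target used in this section. The function name `summarize_temps` and the sample values are illustrative assumptions; the nvidia-smi query in the comment uses the same `temperature.gpu` field shown earlier.

```shell
#!/bin/sh
# summarize_temps reads one GPU temperature (in °C) per line on stdin and
# prints min, average, max, and a verdict against the 80°C target.
summarize_temps() {
    awk '
        NR == 1 { min = $1; max = $1 }
        { sum += $1; if ($1 < min) min = $1; if ($1 > max) max = $1 }
        END {
            if (NR == 0) { print "no samples"; exit 1 }
            verdict = (max < 80) ? "within target" : "too hot"
            printf "min=%d avg=%.1f max=%d %s\n", min, sum / NR, max, verdict
        }'
}

# Example with made-up samples. On a real system, capture a log during a
# sustained inference run and feed it in:
#   nvidia-smi --query-gpu=temperature.gpu --format=csv,noheader,nounits -l 1 > temps.log
#   summarize_temps < temps.log
printf '%s\n' 61 68 72 74 73 | summarize_temps
```

The example prints `min=61 avg=69.6 max=74 within target`. The verdict keys off the maximum, since a single excursion past the target is what tends to trigger throttling.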

NVIDIA Driver Installation for the Tesla P40 on Linux

The P40 uses the Pascal architecture, which is supported by a wide range of NVIDIA driver versions. In 2026, you have two main choices: the latest production driver (560+ series) or the 535 long-lived branch. Both work well.

Ubuntu 22.04 / 24.04

# Option 1: Ubuntu's packaged NVIDIA driver (easiest)

sudo apt update

sudo apt install -y nvidia-driver-560 nvidia-utils-560

# Option 2: the graphics-drivers PPA (community-maintained, not an official NVIDIA repository; sometimes newer)

sudo add-apt-repository ppa:graphics-drivers/ppa

sudo apt update

sudo apt install -y nvidia-driver-560

# Reboot after installation

sudo reboot

# Verify driver installation

nvidia-smi

# Expected output:

# +-----------------------------------------------------------------------------------------+

# | NVIDIA-SMI 560.xx.xx Driver Version: 560.xx.xx CUDA Version: 12.x |

# | GPU Name Persistence-M | Bus-Id Disp.A | Volatile Uncorr. ECC |

# | Tesla P40 Off | 00000000:03:00.0 Off | 0 |

# | 0% 35C P8 10W / 250W | 0MiB / 24576MiB | 0% |

# +-----------------------------------------------------------------------------------------+

RHEL 9 / Rocky Linux 9 / AlmaLinux 9

# Install prerequisites

sudo dnf install -y kernel-devel-$(uname -r) kernel-headers-$(uname -r) gcc make dkms

# Add NVIDIA repository

sudo dnf config-manager --add-repo https://developer.download.nvidia.com/compute/cuda/repos/rhel9/x86_64/cuda-rhel9.repo

# Install the driver

sudo dnf install -y nvidia-driver nvidia-driver-cuda

# Reboot

sudo reboot

# Verify

nvidia-smi

Fedora 40/41

# Using RPM Fusion (recommended for Fedora)

sudo dnf install -y https://download1.rpmfusion.org/free/fedora/rpmfusion-free-release-$(rpm -E %fedora).noarch.rpm

sudo dnf install -y https://download1.rpmfusion.org/nonfree/fedora/rpmfusion-nonfree-release-$(rpm -E %fedora).noarch.rpm

sudo dnf install -y akmod-nvidia xorg-x11-drv-nvidia-cuda

# Wait for the kernel module to build (may take several minutes)

sudo akmods --force

sudo dracut --force

sudo reboot

nvidia-smi

Important: The P40 Does NOT Support nvidia-open

NVIDIA's open-source kernel module (nvidia-open, also called nvidia-open-gpu-kernel-modules) only supports Turing architecture and newer (RTX 20-series, GTX 1650+, and all datacenter GPUs from T4 onward). The P40 is Pascal, which requires the proprietary kernel module. If your distribution defaults to installing nvidia-open, you need to explicitly select the proprietary variant.

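# Check that an NVIDIA kernel module is loaded

lsmod | grep nvidia

# Both flavors load under the name "nvidia", so check the module license to tell them apart

modinfo nvidia | grep -i license

# The proprietary module reports "NVIDIA"; the open module reports "Dual MIT/GPL"

cat /proc/driver/nvidia/version

# The open module identifies itself as "Open Kernel Module" in this file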

# If nvidia-open was installed incorrectly:

# Ubuntu:

sudo apt remove -y nvidia-kernel-open-560

sudo apt install -y nvidia-kernel-source-560

# RHEL/Fedora:

sudo dnf remove -y nvidia-driver-open

sudo dnf install -y nvidia-driver

Power Management and Persistence Mode

The P40 draws up to 250W at full load, but during LLM inference it typically uses 150–200W because inference is memory-bandwidth-limited, not compute-limited. Proper power management reduces electricity costs and heat output without meaningful performance loss.
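To put those wattages in money terms, multiply power by hours and by your electricity rate. The sketch below does that for a 30-day month. The function name `monthly_cost` and the 0.15 per kWh rate are placeholders chosen for illustration, so substitute your own tariff. It also assumes the card sits at the given wattage for every counted hour, which overstates the cost for a mostly idle server.

```shell
#!/bin/sh
# monthly_cost WATTS HOURS_PER_DAY PRICE_PER_KWH
# Prints energy used over a 30-day month and the resulting cost.
monthly_cost() {
    awk -v w="$1" -v h="$2" -v p="$3" 'BEGIN {
        kwh = w * h * 30 / 1000
        printf "%.1f kWh, %.2f per month\n", kwh, kwh * p
    }'
}

# 200 W around the clock at a hypothetical rate of 0.15 per kWh
monthly_cost 200 24 0.15
```

This prints `144.0 kWh, 21.60 per month`. Running the same calculation with the hours you actually expect the GPU to be busy gives a more realistic figure.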

Enable Persistence Mode

Persistence mode keeps the NVIDIA driver initialized even when no GPU application is running. Without it, the driver tears down GPU state once the last client exits (for example, after Ollama unloads an idle model) and re-initializes it on the next request, adding 1–3 seconds of latency to the first request after an idle period.

# Enable persistence mode (temporary, until reboot)

sudo nvidia-smi -pm 1

# Make it permanent via systemd service

sudo systemctl enable nvidia-persistenced

sudo systemctl start nvidia-persistenced

# Verify

nvidia-smi -q | grep "Persistence Mode"

# Should show: Persistence Mode : Enabled

Set Power Limit

You can cap the P40's power consumption without a proportional performance loss. Reducing from 250W to 200W typically costs less than 5% performance but saves 50W of heat and electricity.
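A quick way to compare power caps is relative speed per watt. The sketch below uses this guide's rough figures as inputs: about 5% slower at 200W, and about 9% slower at 180W (the midpoint of the 8–10% estimate given for that setting). The function name `perf_per_watt` is invented for illustration, and the output is only meaningful for comparing settings against each other.

```shell
#!/bin/sh
# perf_per_watt POWER_LIMIT_W RELATIVE_SPEED_PERCENT
# Prints relative speed per watt; higher means more work per unit of energy.
perf_per_watt() {
    awk -v w="$1" -v s="$2" 'BEGIN { printf "%dW: %.3f\n", w, s / w }'
}

perf_per_watt 250 100   # stock limit, baseline speed
perf_per_watt 200 95    # assumes a ~5% speed loss
perf_per_watt 180 91    # assumes a ~9% speed loss
```

The three calls print 0.400, 0.475, and 0.506, so under these assumptions each step down in power limit improves efficiency, with diminishing returns. Measure your own token rates at each limit before settling on one.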

# Check current power limit

nvidia-smi -q | grep "Power Limit"

# Set power limit to 200W (range: 100W-250W)

sudo nvidia-smi -pl 200

# For a more aggressive cap that trades a little more speed for efficiency:

sudo nvidia-smi -pl 180

# This saves ~70W vs default while losing roughly 8-10% token generation speed

# Make power limit persist across reboots with a systemd service

sudo tee /etc/systemd/system/nvidia-power-limit.service << 'EOF'

[Unit]

Description=Set NVIDIA GPU Power Limit

After=nvidia-persistenced.service

[Service]

Type=oneshot

ExecStart=/usr/bin/nvidia-smi -pl 200

RemainAfterExit=yes

[Install]

WantedBy=multi-user.target

EOF

sudo systemctl enable nvidia-power-limit.service

sudo systemctl start nvidia-power-limit.service

GPU Clock Management

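# Lock GPU clocks to maximum for consistent performance during inference

# (prevents clock frequency fluctuations that cause variable latency)

sudo nvidia-smi -lgc 1531,1531 # P40 max boost clock is 1531 MHz

# Note: NVIDIA documents -lgc for Volta and newer GPUs. If the P40 rejects it,

# application clocks are the Pascal-era alternative: list valid pairs with

# nvidia-smi -q -d SUPPORTED_CLOCKS and set one with nvidia-smi -ac <mem,graphics>

# Reset to default (let the GPU manage its own clocks)

sudo nvidia-smi -rgc

# For 24/7 servers, locking clocks is recommended because it eliminates

# the 100-200ms ramp-up time when the GPU transitions from idle to boost

Installing and Running Ollama on the P40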

# Install Ollama

curl -fsSL https://ollama.com/install.sh | sh

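# Run a first prompt as a smoke test (the model cannot actually know its hardware)

ollama run llama3.2:1b "What GPU are you running on?"

# Verify GPU detection: the PROCESSOR column should read "100% GPU"

ollama ps

# Check that the model loaded into GPU VRAM

nvidia-smi

# You should see VRAM usage (e.g., 1200MiB for llama3.2:1b)

Pulling and Testing Models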

# Start with a small model to verify everything works

ollama pull llama3.2:3b

ollama run llama3.2:3b "Explain the Linux boot process in one paragraph."

# Move to the 8B sweet spot

ollama pull llama3.1:8b

ollama run llama3.1:8b "Write a bash script that monitors disk usage and sends an alert."

# Test a 14B model (fits comfortably in 24 GB)

ollama pull deepseek-r1:14b

ollama run deepseek-r1:14b "Analyze the time complexity of quicksort."

# Try Qwen 2.5 32B at Q4 (~18.5 GB VRAM — fits in 24 GB with room for context)

ollama pull qwen2.5:32b

ollama run qwen2.5:32b "Compare the advantages of Btrfs and XFS for a database workload."

Performance Benchmarks: P40 with Ollama

All benchmarks run on a P40 with driver 560.xx, CUDA 12.x, Ollama latest, persistence mode enabled, and 200W power limit. Test prompt: 800 input tokens, 400 output tokens generated.
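If you want to reproduce numbers like these on your own card, Ollama reports the raw counters you need. A non-streaming `/api/generate` response includes `eval_count` and `eval_duration` for generation (and `prompt_eval_count` and `prompt_eval_duration` for prompt processing), with durations in nanoseconds. The sketch below turns one such pair into tokens per second. The function name `tokens_per_sec` and the sample values are made up, and the commented curl pipeline assumes jq is installed.

```shell
#!/bin/sh
# tokens_per_sec TOKEN_COUNT DURATION_NS
# Ollama reports durations in nanoseconds, so scale by 1e9 to get seconds.
tokens_per_sec() {
    awk -v n="$1" -v ns="$2" 'BEGIN { printf "%.1f\n", n / (ns / 1e9) }'
}

# With jq installed, the two fields can be pulled from a live server:
#   curl -s http://localhost:11434/api/generate \
#     -d '{"model": "llama3.1:8b", "prompt": "Hello", "stream": false}' \
#     | jq '.eval_count, .eval_duration'
# Made-up example values: 400 tokens generated in 15.4 seconds.
tokens_per_sec 400 15400000000
```

The example prints `26.0`. For a quick interactive check, `ollama run <model> --verbose` prints the same timing statistics after each response.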

Token Generation Speed by Model

| Model | Quantization | VRAM Used | Prompt Eval (t/s) | Token Gen (t/s) | Usable? |
|---|---|---|---|---|---|
| Llama 3.2 3B | Q4_K_M | ~2.2 GB | ~1,100 | ~48 | Excellent |
| Llama 3.1 8B | Q4_K_M | ~4.9 GB | ~680 | ~26 | Good |
| Llama 3.1 8B | Q5_K_M | ~5.7 GB | ~620 | ~23 | Good |
| Llama 3.1 8B | Q8_0 | ~8.5 GB | ~480 | ~18 | Acceptable |
| Mistral 7B v0.3 | Q4_K_M | ~4.4 GB | ~720 | ~28 | Good |
| DeepSeek-R1 14B | Q4_K_M | ~8.1 GB | ~420 | ~15 | Acceptable |
| Qwen 2.5 14B | Q4_K_M | ~8.3 GB | ~410 | ~14 | Acceptable |
| Qwen 2.5 32B | Q4_K_M | ~18.5 GB | ~240 | ~8 | Slow but works |
| Mixtral 8x7B | Q4_K_M | ~26.4 GB | Overflow to CPU | ~3 | Marginal |
| Llama 3.1 70B | Q4_K_M | ~40.6 GB | Mostly on CPU | ~1.5 | Not recommended |

The sweet spot for the P40 is the 7B–14B range. At these sizes, you get interactive speeds (14–28 tokens per second) with models that are genuinely useful for a wide range of tasks. The 32B models are usable for batch processing or tasks where you do not need instant responses. Anything above 32B parameters spills to CPU and becomes painfully slow.
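These speeds line up with the memory-bandwidth argument from the introduction. If generating each token requires streaming roughly all of a dense model's weights from VRAM once, then bandwidth divided by model size gives a ceiling on tokens per second. The sketch below computes that ceiling from the table's VRAM figures. It is a simplification (the name `bw_ceiling` is invented here, and the estimate ignores KV cache reads and does not apply to mixture-of-experts models, which read only part of their weights per token).

```shell
#!/bin/sh
# bw_ceiling BANDWIDTH_GBPS MODEL_SIZE_GB
# Upper bound on tokens/s if every generated token streams all weights once.
bw_ceiling() {
    awk -v bw="$1" -v gb="$2" 'BEGIN { printf "%.1f\n", bw / gb }'
}

bw_ceiling 346 4.9    # Llama 3.1 8B Q4_K_M on the P40
bw_ceiling 346 18.5   # Qwen 2.5 32B Q4_K_M on the P40
```

The calls print 70.6 and 18.7. The measured ~26 and ~8 t/s land at roughly 40% of those ceilings, which is a useful sanity check when estimating how a model not in the table might perform.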

Context Window Impact on Performance

Longer context windows consume more VRAM for the KV cache and slow down prompt evaluation. Here is how context length affects Llama 3.1 8B Q4_K_M on the P40:

| Context Length | Additional VRAM for KV Cache | Total VRAM | Token Gen (t/s) |
|---|---|---|---|
| 2,048 tokens | ~0.3 GB | ~5.2 GB | ~27 |
| 4,096 tokens | ~0.6 GB | ~5.5 GB | ~26 |
| 8,192 tokens | ~1.2 GB | ~6.1 GB | ~24 |
| 16,384 tokens | ~2.4 GB | ~7.3 GB | ~21 |
| 32,768 tokens | ~4.8 GB | ~9.7 GB | ~17 |

Even at the full 32K context, the P40 has enough VRAM to hold the 8B model plus the KV cache. Performance drops about 37% compared to the minimum context, which is noticeable but still usable.
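The linear growth in the table follows from how the KV cache is laid out: for every token, each layer stores one key and one value vector per KV head. A rough size is therefore 2 × layers × KV heads × head size × bytes per value × tokens. The sketch below plugs in Llama 3.1 8B's architecture (32 layers, 8 KV heads, head size 128) and assumes 16-bit cache entries. The function name `kv_cache_bytes` is illustrative.

```shell
#!/bin/sh
# kv_cache_bytes LAYERS KV_HEADS HEAD_DIM BYTES_PER_VALUE TOKENS
# The leading 2 accounts for storing both keys and values.
kv_cache_bytes() {
    echo $(( 2 * $1 * $2 * $3 * $4 * $5 ))
}

# Llama 3.1 8B: 32 layers, 8 KV heads (grouped-query attention), head size 128,
# 16-bit cache entries (2 bytes each, an assumption about the cache type).
kv_cache_bytes 32 8 128 2 8192    # bytes at 8K context
kv_cache_bytes 32 8 128 2 32768   # bytes at 32K context
```

The calls print 1073741824 and 4294967296 bytes, or about 1.1 GB and 4.3 GB. That is a little below the table's ~1.2 GB and ~4.8 GB, which would be consistent with the measured values including some runtime buffer overhead, though that explanation is a guess.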

Monitoring and Maintaining Your P40 Server

# Real-time GPU monitoring (update every 1 second)

watch -n 1 nvidia-smi

# Monitor GPU temperature, power, and utilization in CSV format

nvidia-smi --query-gpu=timestamp,temperature.gpu,power.draw,utilization.gpu,utilization.memory,memory.used,memory.total --format=csv -l 5

# Log GPU metrics to a file for long-term monitoring (run from a root shell, or point it at a path your user can write)

nvidia-smi --query-gpu=timestamp,temperature.gpu,power.draw,utilization.gpu,memory.used --format=csv -l 60 >> /var/log/gpu-metrics.csv &

# Check GPU error counts (important for used hardware)

nvidia-smi -q | grep -A 5 "ECC Errors"

# Single-bit errors are normal and corrected automatically

# Double-bit errors indicate failing VRAM — consider replacing the card

# Check current power draw (an instantaneous reading, not a cumulative total)

nvidia-smi -q | grep "Power Draw"

Setting Up Alerts

# Simple temperature alert script

sudo tee /usr/local/bin/gpu-temp-alert.sh << 'SCRIPT'

#!/bin/bash

TEMP=$(nvidia-smi --query-gpu=temperature.gpu --format=csv,noheader,nounits)

if [ "$TEMP" -gt 85 ]; then

echo "GPU temperature critical: ${TEMP}°C" | \

systemd-cat -t gpu-alert -p emerg

# Optional: send email or webhook notification

fi

SCRIPT

sudo chmod +x /usr/local/bin/gpu-temp-alert.sh

# Run every 5 minutes via cron (appends to root's existing crontab instead of replacing it)

(sudo crontab -l 2>/dev/null; echo "*/5 * * * * /usr/local/bin/gpu-temp-alert.sh") | sudo crontab -

Which Models Run Best on the P40

Based on the benchmarks and practical usage, here are specific model recommendations for P40 users:

Best All-Around: Llama 3.1 8B (Q4_K_M)

The default recommendation. It fits comfortably with room for long context, generates tokens at a usable 26 t/s, and handles a wide range of tasks well: conversation, summarization, code assistance, writing. Pull it with ollama pull llama3.1:8b.

Best for Code: DeepSeek Coder V2 Lite (16B, Q4_K_M)

Strong code generation and understanding. Uses about 9.5 GB VRAM at Q4, leaving room for context. Runs at ~13 t/s on the P40 — slower than the 8B models but the code quality is noticeably better. Pull with ollama pull deepseek-coder-v2:16b.

Best for Reasoning: DeepSeek-R1 14B (Q4_K_M)

Excellent at multi-step reasoning, math, and analytical tasks. The chain-of-thought approach produces longer outputs (more tokens), so the 15 t/s generation speed means responses take longer. Worth the wait for complex problems. Pull with ollama pull deepseek-r1:14b.

Best Quality (If You Can Wait): Qwen 2.5 32B (Q4_K_M)

The highest-quality model that fits in the P40's 24 GB. At 8 t/s, it is slow for interactive chat but excellent for batch processing, document analysis, and tasks where you fire off a request and check back in 30 seconds. Quality approaches GPT-4o-mini levels on many benchmarks. Pull with ollama pull qwen2.5:32b.

Best for Multilingual: Qwen 2.5 14B (Q4_K_M)

If you work with non-English text, Qwen 2.5 has the strongest multilingual capabilities at this size. Particularly good with Chinese, Japanese, Korean, and European languages. 14 t/s on the P40. Pull with ollama pull qwen2.5:14b.
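When choosing between these models, it can help to translate tokens per second into wall-clock time for a typical request. The sketch below adds prompt evaluation time and generation time, using the 800-token prompt and 400-token output from the benchmark setup and the rates from the benchmark table. The function name `response_seconds` is made up for this example.

```shell
#!/bin/sh
# response_seconds PROMPT_TOKENS PROMPT_TPS OUTPUT_TOKENS GEN_TPS
# Total time = prompt evaluation time + generation time.
response_seconds() {
    awk -v pt="$1" -v pr="$2" -v ot="$3" -v gr="$4" \
        'BEGIN { printf "%.1f\n", pt / pr + ot / gr }'
}

response_seconds 800 680 400 26   # Llama 3.1 8B Q4_K_M
response_seconds 800 240 400 8    # Qwen 2.5 32B Q4_K_M
```

The calls print 16.6 and 53.3 seconds. Generation dominates in both cases, so reasoning models that emit long chains of thought will take proportionally longer than these figures suggest.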

Tips for Getting the Most Out of a P40 Build

System RAM Matters for Large Models

# If a model exceeds 24 GB VRAM, Ollama offloads layers to system RAM

# This works but is slow — system RAM bandwidth (~40 GB/s DDR4) is 8x slower than VRAM

# Having 32-64 GB system RAM lets you run larger models with partial offloading

# Example: Mixtral 8x7B needs ~26 GB total

# P40 holds ~23 GB in VRAM, remaining ~3 GB goes to system RAM

# This works if you have sufficient RAM, but expect slower performance

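# Check how Ollama is splitting the model between GPU and CPU:

ollama run mixtral:8x7b "test"

ollama ps

# The PROCESSOR column shows the split as a CPU/GPU percentage pair

# Layer-level detail is in the server log (systemd installs):

journalctl -u ollama --no-pager | grep -i "offload"

Use an SSD for Model Storage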

# Models are stored in ~/.ollama/models/ (or /usr/share/ollama/.ollama/models/ for the service)

# A 7B Q4 model is ~4.5 GB, a 70B Q4 model is ~40 GB

# Loading a model from HDD takes 30-60 seconds; from SSD it takes 3-8 seconds

# NVMe vs SATA SSD makes little difference for model loading (both are fast enough)

# Check where models are stored and how much space they use

du -sh /usr/share/ollama/.ollama/models/

# Move model storage to a different drive if needed

sudo systemctl stop ollama

sudo mv /usr/share/ollama/.ollama/models /mnt/ssd/ollama-models

sudo ln -s /mnt/ssd/ollama-models /usr/share/ollama/.ollama/models

sudo chown -R ollama:ollama /mnt/ssd/ollama-models

sudo systemctl start ollama

Run Ollama as an API Server

# Ollama runs a REST API on port 11434 by default

# You can use it from any application that speaks HTTP

# Test the API

curl http://localhost:11434/api/generate -d '{

"model": "llama3.1:8b",

"prompt": "What is the P40 GPU?",

"stream": false

}'

# For remote access, bind to all interfaces (careful with firewall!)

# Edit the Ollama service:

sudo systemctl edit ollama

# Add:

# [Service]

# Environment="OLLAMA_HOST=0.0.0.0:11434"

# Then restrict access with firewall rules (firewalld syntax shown; on Ubuntu, use ufw instead)

sudo firewall-cmd --add-rich-rule='rule family="ipv4" source address="192.168.1.0/24" port port="11434" protocol="tcp" accept' --permanent

sudo firewall-cmd --reload

Further Reading

- Grootendorst, M. & Alammar, J. (2025). An Illustrated Guide to AI Agents. O'Reilly Media. Covers agent memory systems, RAG pipelines, tool usage, MCP protocol, and context engineering with clear visual explanations.

- Ranjan, R. et al. (2025). Agentic AI in Enterprise. Apress. Explores enterprise AI architecture, RAG vs fine-tuning trade-offs, vector databases, prompt engineering, GPU acceleration, and Kubernetes orchestration for production AI.

- Brousseau, D. & Sharp, T. (2025). LLMs in Production. Manning Publications. Covers neural network fundamentals, transformer architecture, GPU management, latency optimization, Kubernetes deployment, MLOps pipelines, embeddings, and model quantization.

Frequently Asked Questions

Is a Tesla P40 for $100 too good to be true? What is the catch?

The P40's low price is real and reflects market dynamics, not defective hardware. These cards were deployed by the thousands in datacenters and are being sold as cloud providers upgrade to newer hardware. The "catches" are practical rather than quality-related: no display output (you need a separate GPU or integrated graphics for a monitor), passive cooling (requires an aftermarket cooler or server chassis), high power consumption (250W TDP), and older architecture (no FP16 tensor cores). None of these affect its usefulness for LLM inference via Ollama. The cards have typically been running in temperature-controlled datacenters, so they are often in better condition than consumer GPUs that spent years in gaming rigs.

Can I run the P40 without a cooler modification if I have good case airflow?

In a standard desktop case, no. The P40's passive heatsink requires direct, high-velocity airflow that desktop cases do not provide. Even cases with excellent airflow (like the Fractal Meshify or Lian Li Lancool mesh series) do not direct enough air over the GPU heatsink to prevent thermal throttling under sustained inference loads. You have three options: install an aftermarket GPU cooler ($25–$45), use a server chassis with built-in high-speed fans ($0 if included with used server), or zip-tie case fans directly above the GPU heatsink (ugly but works). The aftermarket cooler approach is the most reliable for desktop builds.

How loud is a P40-based server compared to a regular desktop?

If you use a desktop case with an aftermarket GPU cooler, noise levels are similar to any desktop PC — the GPU cooler adds one or two fans running at moderate speed. A used tower server (Dell T620, HP ML350) is significantly louder because server fans run at high RPM. Expect 40–50 dBA from a tower server at idle, spiking to 55–60 dBA during P40 inference (when the server detects higher temperatures and increases fan speed). For comparison, a normal desktop is around 30–35 dBA. If noise matters, go with the desktop build option and an aftermarket cooler.

Should I buy one P40 or two for a home LLM server?

Start with one. A single P40 with 24 GB VRAM handles the most practical use cases: all 7B–14B models comfortably and 32B models at Q4. Adding a second P40 gives you 48 GB total VRAM, which opens up Llama 3.1 70B at Q4 (~40 GB), but sharding one model across two PCIe-connected GPUs adds inter-GPU communication overhead, so the pair does not run twice as fast as a single card. If you specifically need 70B model support, a single A100 40 GB (around $600 used) is faster than dual P40s and simpler to manage, although the ~40.6 GB Q4_K_M file from the benchmark table would not fit entirely in 40 GB, so plan on a slightly smaller quantization there. Dual P40s make sense if you want to run two different models simultaneously (one on each GPU) rather than sharding one large model.

How long will the P40 remain useful for running LLMs with Ollama?

The P40 will remain useful as long as 24 GB VRAM is sufficient for the models you want to run and NVIDIA maintains driver support for Pascal. Current driver support extends through at least the 560 series, and NVIDIA historically supports architectures for 8–10 years after release (Pascal launched in 2016). Model architecture trends favor the P40: quantization techniques continue to improve, meaning better quality at the same VRAM footprint. A Q4_K_M quantized model in 2026 is notably better quality than Q4 quantization from 2024. The 24 GB VRAM capacity will remain relevant for 7B–32B models for years. What will eventually make the P40 obsolete is not VRAM capacity but speed: as newer, faster cards become cheaper on the used market, the P40's 346 GB/s bandwidth will become the bottleneck that pushes users to upgrade.