AI

AI and machine learning on Linux — deploy LLMs, GPU setup, self-hosted AI tools, and intelligent automation for sysadmins and DevOps engineers.

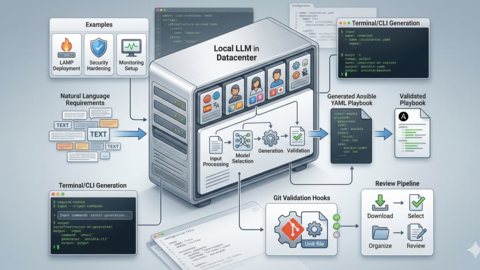

Generate Ansible Playbooks with Local LLMs: AI-Assisted Infrastructure as Code

Build a local CLI tool that generates Ansible playbooks from natural language using Ollama. No vendor lock-in, full...

AI-Powered Log Analysis on Linux: Use Ollama to Parse Syslog, Journald, and Application Logs

Use Ollama and local LLMs to analyze Linux logs from journalctl, auth.log, and nginx. Includes a Python log analyzer,...

ComfyUI on a Headless Linux Server: Stable Diffusion with API Access

Install and run ComfyUI on a headless Linux server for Stable Diffusion image generation. Covers Python venv setup,...

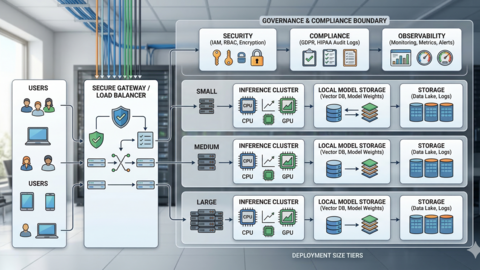

Private LLM for Enterprise: Linux Deployment Architecture and Security Guide

Enterprise reference architecture for self-hosted LLMs on Linux. Covers data sovereignty, hardware sizing, network...

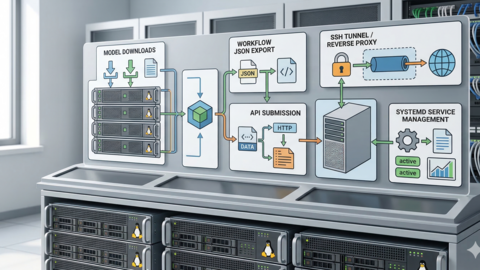

LocalAI on Linux: Deploy an OpenAI-Compatible API Server with Local Models

Set up LocalAI on Linux as a drop-in OpenAI API replacement. Covers Docker and binary installation, GGUF models, GPU...

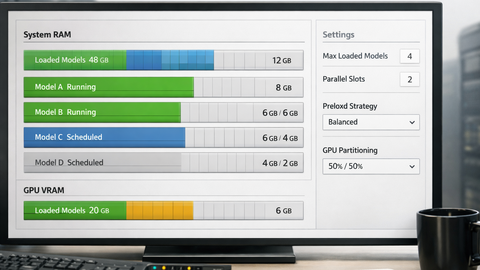

Running Multiple Ollama Models: Memory Management and Optimization Guide

Configure Ollama to run multiple models simultaneously on Linux with proper VRAM partitioning, memory scheduling, model...

Ollama Python API: Build Linux Administration Tools with Local LLMs

Use the Ollama Python library to build practical sysadmin tools — log analyzers, config generators, incident...

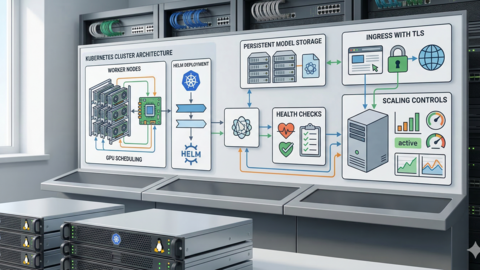

Kubernetes Ollama Deployment: Production GPU Scheduling and Scaling Guide

Deploy Ollama on Kubernetes with GPU scheduling via nvidia-device-plugin, Helm charts, PersistentVolumeClaims for model...

GPU Monitoring for AI Workloads on Linux: Tools, Dashboards, and Alerts

Deep dive into GPU monitoring tools for AI workloads on Linux: nvidia-smi, nvtop, gpustat, Prometheus exporters, and Grafana dashboards....

Ollama Behind Nginx: Reverse Proxy with Authentication, SSL, and Rate Limiting

Production guide to running Ollama behind Nginx with SSL termination, basic and API key authentication, per-IP rate...

AnythingLLM on Linux: Self-Hosted RAG with Ollama and Document Chat

Set up AnythingLLM on Linux with Docker or bare metal, connect Ollama for local inference, ingest documents for RAG,...

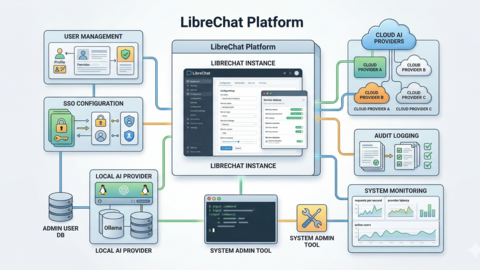

LibreChat on Linux: Complete Installation and Multi-Provider Configuration Guide

Deploy LibreChat on Linux with Docker Compose, connect Ollama, OpenAI, Anthropic, and Azure simultaneously, configure...