AI

AI and machine learning on Linux: LLM deployment, GPU setup, self-hosted AI tools, and intelligent automation for sysadmins and DevOps engineers.

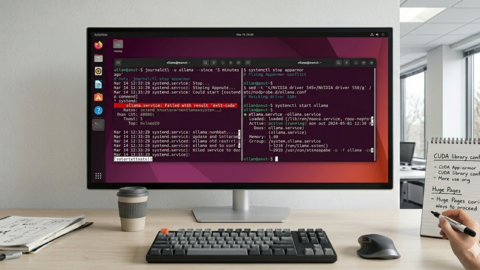

Ollama on Ubuntu 24.04 LTS: Known Issues, Fixes, and Optimization

Fix Ollama issues on Ubuntu 24.04 LTS. Covers NVIDIA driver problems, systemd config, AppArmor conflicts, CUDA...

LLM Security on Linux: Prompt Injection, API Auth, and Network Isolation

Secure self-hosted LLMs on Linux against prompt injection, API abuse, and network exposure. Covers input sanitization,...

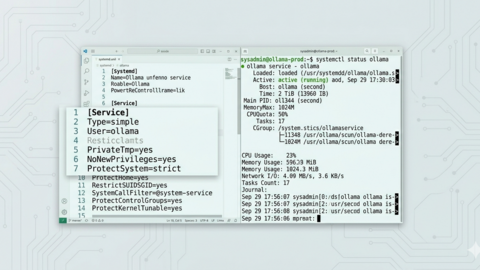

Ollama Systemd Service: Production Hardening and Performance Tuning

Harden and optimize your Ollama systemd service for production Linux deployments with security isolation, resource...

AI-Assisted Ansible Troubleshooting with Local LLMs on Linux

Use local LLMs with Ollama to troubleshoot Ansible failures on Linux. Build error analyzers, callback plugins, and...

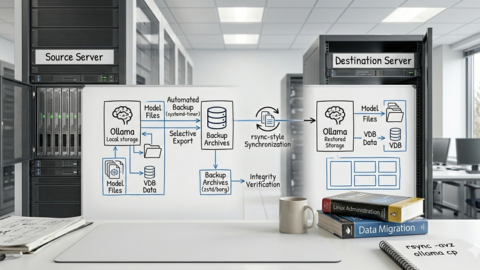

Ollama Backup and Migration: Move Models Between Linux Servers

Back up and migrate Ollama models between Linux servers. Covers storage layout, selective model export, rsync...

Whisper Speech-to-Text on Linux: Deploy a Self-Hosted Transcription Server

Deploy OpenAI Whisper as a self-hosted transcription server on Linux. Covers faster-whisper, REST API setup, GPU...

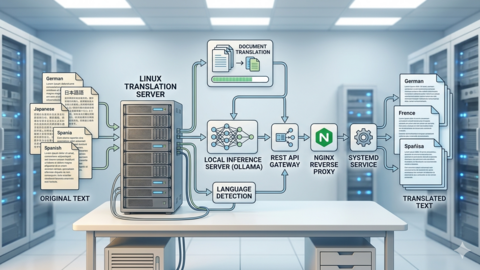

Self-Hosted AI Translation Server on Linux with Ollama

Build a self-hosted AI translation server on Linux with Ollama. Covers REST API setup, model selection for multilingual...

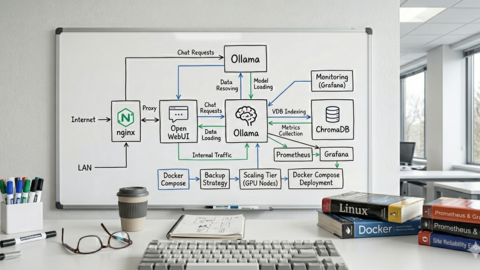

AI-Powered Monitoring and Alerting on Linux with Open Source Tools

Integrate local LLMs with Prometheus, Grafana, and Alertmanager on Linux to build intelligent monitoring that detects...

Docker GPU Passthrough on Linux for AI Workloads

Configure Docker GPU passthrough on Linux for AI containers. Covers NVIDIA Container Toolkit install, runtime...

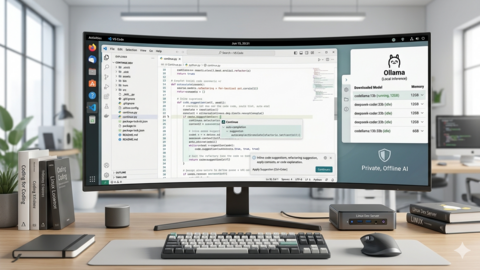

Continue.dev and Ollama: Self-Hosted AI Coding Assistant for VS Code on Linux

Set up Continue.dev with Ollama as a self-hosted AI coding assistant in VS Code on Linux. Covers config, model...

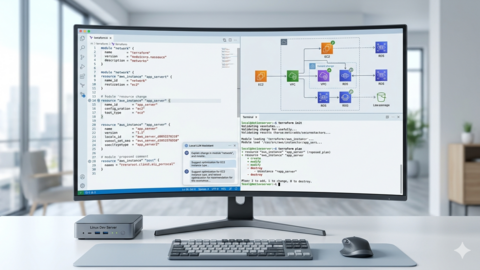

Terraform and Local LLMs: AI-Assisted Infrastructure as Code on Linux

Use local LLMs with Terraform on Linux to generate HCL configurations, validate plans, explain infrastructure changes,...

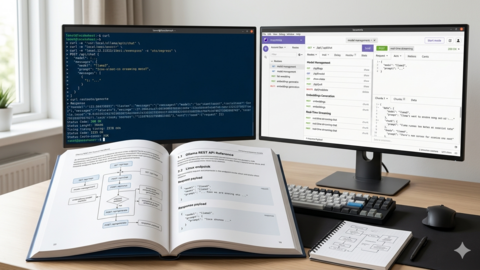

Ollama REST API Reference: Complete Endpoint Guide for Linux Developers

Complete Ollama REST API reference for Linux developers. Covers all endpoints: generate, chat, embeddings, model...