AI

AI and machine learning on Linux: LLM deployment, GPU setup, self-hosted AI tools, and intelligent automation for sysadmins and DevOps engineers.

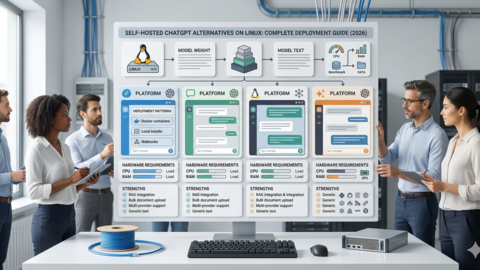

Self-Hosted ChatGPT Alternatives on Linux: Complete Deployment Guide (2026)

Deploy private ChatGPT alternatives on Linux with Ollama. Step-by-step install for Open WebUI, LibreChat, AnythingLLM,...

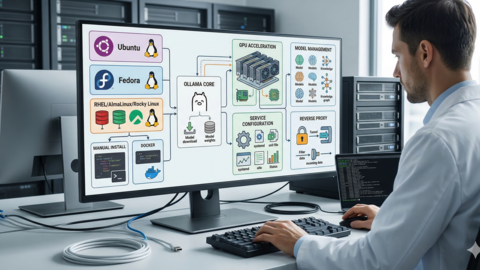

How to Install Ollama on Linux: Complete Guide for Ubuntu, Fedora, and RHEL (2026)

Step-by-step guide to install Ollama on Linux. Covers Ubuntu, Fedora, RHEL, Docker, GPU setup (NVIDIA/AMD), systemd...